Problem

You enabled distributed tracing, but the data you expected doesn't appear in the New Relic distributed tracing UI.

Before you start

Before you troubleshoot, the following may help:

- Review the technical details of how distributed tracing works.

- Use the Instrumentation status app to get an overview of your trace instrumentation, including sampling rates. This can help you understand missing spans and fragmented traces. To find it, go to one.newrelic.com > All capabilities > Apps > Distributed tracing instrumentation status.

Solutions

Here are some causes of missing trace data and how to resolve them.

Problems with enablement or instrumentation

For distributed tracing to report details for every node in a trace, each application must be monitored by a New Relic agent that has distributed tracing enabled.

If an application's New Relic account doesn't have distributed tracing enabled, the following happens:

- Its distributed tracing UI page has no data.

- It doesn't report data to distributed traces in other accounts.

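When you enable distributed tracing for applications and services that New Relic instruments automatically, you'll usually see complete, detailed data for those nodes in the distributed tracing UI.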

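However, you may notice that some services or applications are missing from a trace, or that some internal spans you expected to see are missing. In that case, consider implementing custom instrumentation for the application or for specific transactions so you can see more detail in the trace. Some examples of when you might need to do this: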

- Transactions not automatically instrumented. To check whether your application is instrumented automatically, read the compatibility and requirements documentation for the New Relic agent you're using. If your application isn't instrumented automatically, or if you want to add instrumentation for specific activity, see Custom instrumentation.

- All Go applications. Unlike the other agents, the Go agent requires you to instrument your code manually. For instructions, see Instrument your Go application.

- A service doesn't use HTTP. If a service doesn't communicate over HTTP, the New Relic agent won't send distributed tracing headers. This can be the case for some non-web applications or message queues. To resolve this, use the distributed tracing APIs to instrument either the calling application or the called application.
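To illustrate what carrying trace context over a non-HTTP transport involves, here is a minimal Python sketch that copies a W3C-style `traceparent` value into a message's header map on the producer side and reads it back on the consumer side. The `Message` class and the `new_traceparent`, `produce`, and `consume` helpers are hypothetical; in a real application the agent's distributed tracing API generates and accepts the headers, so treat this only as a picture of the data flow.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class Message:
    """Hypothetical queue message: a body plus a string-to-string header map."""
    body: str
    headers: dict = field(default_factory=dict)


def new_traceparent() -> str:
    """Build a W3C traceparent value: version-traceid-parentid-flags."""
    trace_id = secrets.token_hex(16)   # 32 hex characters
    parent_id = secrets.token_hex(8)   # 16 hex characters
    return f"00-{trace_id}-{parent_id}-01"


def produce(body: str, traceparent: str) -> Message:
    """Producer side: attach the caller's trace context to the outgoing message."""
    return Message(body=body, headers={"traceparent": traceparent})


def consume(message: Message) -> str:
    """Consumer side: recover the trace ID so new spans can join the same trace."""
    traceparent = message.headers.get("traceparent")
    if traceparent is None:
        # Without the header, the consumer can only start a new, disconnected trace.
        return secrets.token_hex(16)
    return traceparent.split("-")[1]


if __name__ == "__main__":
    tp = new_traceparent()
    msg = produce("order-created", tp)
    print("consumer joined trace", consume(msg))
```

The key design point is that the header travels inside the message itself, because there is no HTTP request for the agent to attach it to.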

Span problems

queue_size is an additional APM agent setting that limits how many spans the agent holds when it can't write data to the trace observer fast enough. See the following examples for your agent:

| .NET configuration method | Example |
|---|---|
| Configuration file | |
| Environment variable | |

| Python configuration method | Example |
|---|---|
| Configuration file | |
| Environment variable | |
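To make the effect of a queue size limit concrete, here is a minimal Python sketch of a bounded span buffer between an application and a slower consumer that stands in for the trace observer. The `SpanQueue` class, its drop-when-full policy, and the traffic numbers are assumptions for illustration; this does not describe how any New Relic agent actually manages its queue.

```python
from collections import deque


class SpanQueue:
    """Hypothetical bounded buffer that holds at most `queue_size` spans."""

    def __init__(self, queue_size: int) -> None:
        self.queue_size = queue_size
        self.buffer: deque = deque()
        self.dropped = 0

    def offer(self, span: str) -> bool:
        """Enqueue a span; if the buffer is full, discard it and count the loss."""
        if len(self.buffer) >= self.queue_size:
            self.dropped += 1
            return False
        self.buffer.append(span)
        return True

    def drain(self, max_spans: int) -> list:
        """Simulate the consumer sending up to `max_spans` spans per tick."""
        sent = []
        while self.buffer and len(sent) < max_spans:
            sent.append(self.buffer.popleft())
        return sent


if __name__ == "__main__":
    q = SpanQueue(queue_size=100)
    # The app produces 50 spans per tick, but the consumer only drains 30.
    for tick in range(10):
        for i in range(50):
            q.offer(f"span-{tick}-{i}")
        q.drain(30)
    print("held:", len(q.buffer), "dropped:", q.dropped)  # held: 70 dropped: 130
```

Here the producer outpaces the consumer by 20 spans per tick, so once the buffer fills, 20 spans are dropped every tick. In this model a larger queue only delays the drops when the consumer is persistently slower; it absorbs bursts rather than sustained overload.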

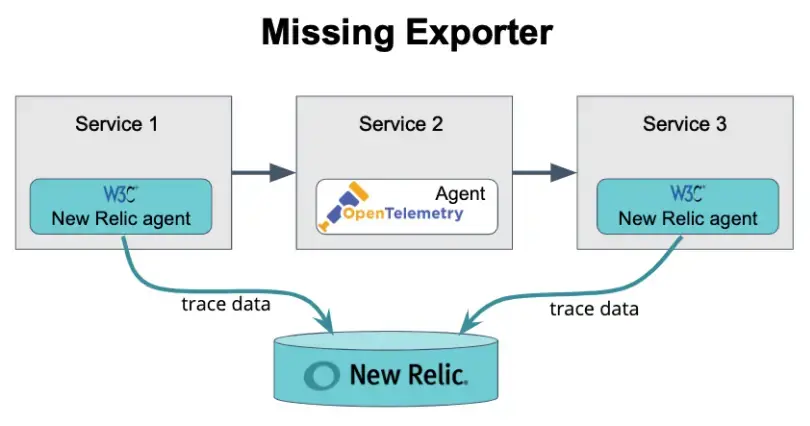

Header propagation can succeed while span information still isn't sent to New Relic. For example, if OpenTelemetry isn't set up with a New Relic exporter, span details never reach New Relic.

In this diagram, note that header propagation succeeds, but Service 2 has no exporter configured to send spans to New Relic.

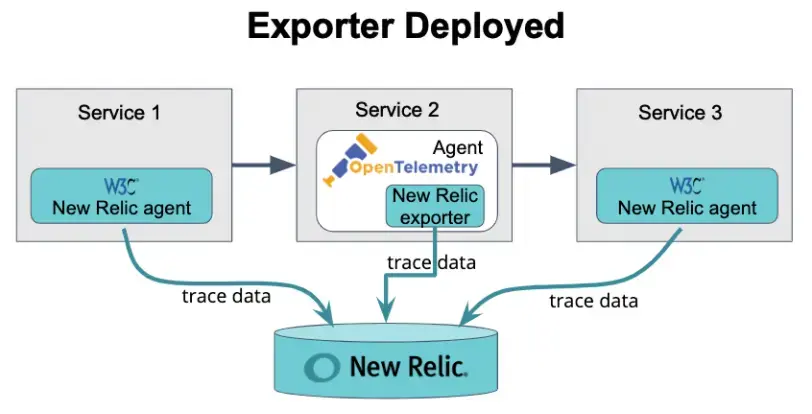

The following diagram also shows successful header propagation, but it includes an exporter in Service 2 that sends span details to New Relic (see Trace API).

Standard distributed tracing uses adaptive sampling. This means that only a percentage of calls to a service are reported as part of distributed tracing, so a particular call to your service may not have been selected for sampling.
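The idea behind adaptive sampling can be sketched as a sampler that aims for a target number of sampled calls per interval and derives each interval's sampling probability from the traffic seen in the previous one. This is a simplified model with assumed parameters (`target`, and an interval boundary signaled by the caller through the hypothetical `end_interval` method), not New Relic's actual algorithm, but it shows why a specific call may not be selected.

```python
import random
from typing import Optional


class AdaptiveSampler:
    """Simplified adaptive sampler (hypothetical model, not any agent's real code)."""

    def __init__(self, target: int, seed: int = 0) -> None:
        self.target = target                      # desired sampled calls per interval
        self.probability: Optional[float] = None  # unknown until one interval has passed
        self.seen = 0                             # calls seen in the current interval
        self.rng = random.Random(seed)

    def should_sample(self) -> bool:
        """Decide whether the current call is sampled."""
        self.seen += 1
        if self.probability is None:
            # First interval: no traffic history yet, so take the first `target` calls.
            return self.seen <= self.target
        return self.rng.random() < self.probability

    def end_interval(self) -> None:
        """Set the next interval's probability from this interval's traffic."""
        if self.seen > 0:
            self.probability = min(1.0, self.target / self.seen)
        self.seen = 0


if __name__ == "__main__":
    sampler = AdaptiveSampler(target=10)
    first = sum(sampler.should_sample() for _ in range(1000))   # exactly 10
    sampler.end_interval()
    second = sum(sampler.should_sample() for _ in range(1000))  # about 10 on average
    print(first, second)
```

With 1,000 calls per interval and a target of 10, any single call in this model has roughly a 1% chance of being sampled, which is why the one request you are looking for may be absent from the trace list.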

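There are limits on how many spans can be collected and displayed. If an application generates a very large number of spans for a single call, it may exceed the APM agent's span collection limit for that harvest cycle. This can cause spans to be lost and can significantly limit the number of traces the agent can fully sample and report.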
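The following sketch shows how a fixed per-harvest span limit can leave traces incomplete. It uses classic reservoir sampling (Algorithm R) as a stand-in for an agent's span storage; the choice of reservoir sampling, the `limit` of 2,000, and the trace sizes are assumptions for illustration, not a description of a specific agent.

```python
import random
from collections import Counter


def reservoir(spans, limit, rng):
    """Algorithm R: keep a uniform random sample of at most `limit` spans."""
    kept = []
    for i, span in enumerate(spans):
        if i < limit:
            kept.append(span)
        else:
            j = rng.randint(0, i)  # inclusive bounds
            if j < limit:
                kept[j] = span
    return kept


def complete_traces(spans, kept):
    """Count traces whose spans all survived the reservoir."""
    total = Counter(trace_id for trace_id, _ in spans)
    survived = Counter(trace_id for trace_id, _ in kept)
    return sum(1 for trace_id, n in total.items() if survived[trace_id] == n)


if __name__ == "__main__":
    rng = random.Random(42)
    # 20 traces x 150 spans = 3,000 spans in one harvest cycle (hypothetical numbers).
    spans = [(f"trace-{t}", s) for t in range(20) for s in range(150)]
    kept = reservoir(spans, limit=2000, rng=rng)
    print(len(kept), "spans kept;", complete_traces(spans, kept), "complete traces")
```

Even though two thirds of the spans are kept, almost no trace survives intact, because losing any one of its 150 spans makes a trace incomplete.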

Currently, only 10,000 spans are displayed at a time.

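To be captured in the trace index, a span must be sent within 60 minutes of its timestamp. If you send a span that's older than 60 minutes but newer than one day, the span data is still written, but it isn't included in the trace index, which controls the trace list in the distributed tracing UI. If a span's timestamp is older than one day, the span is dropped. This commonly happens when there's clock skew (a timing difference) between systems or with long-running background jobs.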
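The timestamp rules in the previous paragraph can be expressed as a small classification function. The function name and return labels are hypothetical, and the treatment of the exact 60-minute and one-day boundaries is an assumption; the thresholds themselves come from the text. Age is measured against the receiving side's clock, which is where clock skew causes trouble.

```python
from datetime import datetime, timedelta, timezone

INDEX_WINDOW = timedelta(minutes=60)
RETENTION_LIMIT = timedelta(days=1)


def classify_span(span_time: datetime, received_at: datetime) -> str:
    """Classify a span by how old its timestamp is when it arrives (sketch)."""
    age = received_at - span_time
    if age <= INDEX_WINDOW:
        # A negative age (a timestamp in the future due to clock skew) also lands here.
        return "written and indexed"
    if age <= RETENTION_LIMIT:
        return "written, not in trace index"
    return "dropped"


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    for minutes in (5, 90, 60 * 30):
        print(minutes, classify_span(now - timedelta(minutes=minutes), now))
    # 5 written and indexed
    # 90 written, not in trace index
    # 1800 dropped
```

For example, a host whose clock runs two hours behind produces spans that look two hours old on arrival, so they are written but never appear in the trace list.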

Important

Spans older than 60 minutes that are sent via the OpenTelemetry Protocol (OTLP) aren't written to NRDB, and they generate the following NrIntegrationError:

The span timestamp cannot be older than 60 minutes.

Problems with trace details

Some potential problems with proxies and other intermediaries:

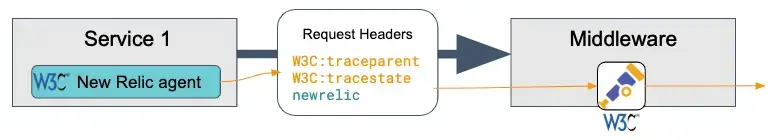

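Incomplete trace. Some intermediaries don't propagate distributed tracing headers automatically. In that case, you need to configure that component so the headers are passed from the source to the destination. For instructions, see the documentation for that intermediary component.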

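Missing intermediary in trace. If the intermediary is monitored by New Relic, make sure it propagates the newrelic header generated or updated by the New Relic agent running on that intermediary. This issue can occur if the intermediary previously appeared in traces but disappeared after distributed tracing was enabled for an upstream browser entity (for example, a browser-monitored application).

Tip

If some entities report trace data to a different tracing system, you can use the trace ID from the New Relic UI to search that other system for the missing spans.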
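For an intermediary that doesn't propagate headers on its own, the fix amounts to copying the trace context headers from the incoming request onto the outgoing one. Here is a minimal Python sketch, assuming the intermediary exposes headers as plain dictionaries; `traceparent` and `tracestate` are the W3C Trace Context headers and `newrelic` is the New Relic header mentioned earlier, while `forward_trace_headers` is a hypothetical name.

```python
TRACE_HEADERS = ("traceparent", "tracestate", "newrelic")


def forward_trace_headers(incoming: dict, outgoing: dict) -> dict:
    """Return a copy of `outgoing` with trace context headers carried over from `incoming`.

    HTTP header names are case-insensitive, so names are compared in lowercase.
    """
    carried = {
        name.lower(): value
        for name, value in incoming.items()
        if name.lower() in TRACE_HEADERS
    }
    # Drop existing case variants of the carried headers (e.g. "Traceparent") to avoid duplicates.
    result = {k: v for k, v in outgoing.items() if k.lower() not in carried}
    result.update(carried)
    return result


if __name__ == "__main__":
    incoming = {
        "Host": "edge.example",
        "Traceparent": "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01",
        "newrelic": "<opaque payload>",
    }
    print(forward_trace_headers(incoming, {"Host": "backend.internal"}))
```

Only the trace headers are copied; other request headers such as `Host` keep the values the intermediary sets for the outgoing hop.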

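If every agent in the chain supports W3C Trace Context, the spans can be stitched together into a complete trace. If part of the chain comes from an agent that doesn't support W3C Trace Context, such as Zipkin, spans from that agent may not be included in the trace.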
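For reference, the W3C Trace Context `traceparent` header has four dash-separated fields: a version, a 32-hex-digit trace ID, a 16-hex-digit parent ID, and flags. The shared trace ID is what lets spans from different agents be stitched into one trace. The sketch below validates and parses a version `00` header; it is a simplified reading of the specification (for example, it rejects other versions instead of applying the spec's forward-compatibility rules), and `parse_traceparent` is a hypothetical helper name.

```python
import re
from typing import NamedTuple, Optional

# Version 00 only; the spec requires lowercase hex.
_TRACEPARENT = re.compile(r"00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


class TraceParent(NamedTuple):
    trace_id: str
    parent_id: str
    sampled: bool


def parse_traceparent(value: str) -> Optional[TraceParent]:
    """Parse a version-00 traceparent header; return None if it is invalid."""
    match = _TRACEPARENT.fullmatch(value.strip())
    if not match:
        return None
    trace_id, parent_id, flags = match.groups()
    # All-zero IDs are invalid per the spec.
    if trace_id == "0" * 32 or parent_id == "0" * 16:
        return None
    # Bit 0 of the flags is the "sampled" flag.
    return TraceParent(trace_id, parent_id, bool(int(flags, 16) & 0x01))


if __name__ == "__main__":
    print(parse_traceparent("00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"))
```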

If a trace includes data from applications monitored by multiple New Relic accounts, and your user permissions don't give you access to those accounts, some span and service details are obscured in the UI.

For example, if you don't have access to the account linked to a service, you may see a series of asterisks (*****) instead of the service name in the distributed tracing list.

The trace list is generated from a trace index that's captured during a 20-minute window starting when the first span is received.

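Spans that arrive after that window aren't reflected in the trace list, so when the trace list and the trace details disagree, late-arriving spans are usually the cause.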
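To see how late spans cause this, the sketch below splits a trace's span arrival times into those that fall inside the 20-minute index window, measured from the first span received, and those that arrive later. The function name and the arrival-time inputs are hypothetical; the sketch only models which spans the index window would cover.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

INDEX_WINDOW = timedelta(minutes=20)


def split_by_index_window(arrivals: List[datetime]) -> Tuple[List[datetime], List[datetime]]:
    """Return (indexed, late) arrival times relative to the first span received."""
    if not arrivals:
        return [], []
    first = min(arrivals)
    indexed = [t for t in arrivals if t - first <= INDEX_WINDOW]
    late = [t for t in arrivals if t - first > INDEX_WINDOW]
    return indexed, late


if __name__ == "__main__":
    base = datetime(2024, 1, 1, 12, 0)
    arrivals = [base + timedelta(minutes=m) for m in (0, 5, 19, 25)]
    indexed, late = split_by_index_window(arrivals)
    print(len(indexed), "indexed,", len(late), "late")  # 3 indexed, 1 late
```

In this example the span that arrives 25 minutes after the first one still shows up in the trace details, but the trace list, built from the index, reflects only the first three.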

If a long trace has an unusually short backend time, there may be a problem with the timestamps being sent.

For example, the root span may report microseconds as milliseconds. This can also happen when the root span comes from a browser application: with external clients such as web browsers, clock skew (timing differences) is common.
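One way to spot the microseconds-as-milliseconds mistake is to check the magnitude of epoch timestamps: between roughly 2001 and 2286, Unix time has 10 digits in seconds, 13 in milliseconds, and 16 in microseconds. The helper below is a hypothetical heuristic based on that observation, not something New Relic provides, and it assumes timestamps fall inside that date range.

```python
def normalize_to_millis(timestamp: int) -> int:
    """Heuristically convert an epoch timestamp to milliseconds.

    Assumes dates between about 2001 and 2286, where epoch seconds have
    10 digits, milliseconds 13, and microseconds 16.
    """
    if timestamp < 10**11:      # too small for milliseconds: treat as seconds
        return timestamp * 1000
    if timestamp >= 10**14:     # too large for milliseconds: treat as microseconds
        return timestamp // 1000
    return timestamp


if __name__ == "__main__":
    for ts in (1_700_000_000, 1_700_000_000_000, 1_700_000_000_000_000):
        print(ts, "->", normalize_to_millis(ts))
```

If a root span's timestamp normalizes to a value 1,000 times different from the one it was reported with, its unit is probably wrong, which would distort the computed backend time.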

Browser application problems

Older versions of some APM agents aren't compatible with browser distributed tracing. If a browser application sends an AJAX request to an APM application running an incompatible agent, the APM agent may not record transaction and span data for that request.

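If distributed tracing is enabled for your browser application and you don't see transaction or span data for downstream APM requests, review the distributed tracing requirements for browser data and make sure all applications are running a supported version of the APM agent.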

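If you think end-user spans are missing from a trace, make sure you've read and understood the requirements for browser distributed tracing and followed the steps to enable it.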

The AJAX UI page links to the distributed tracing UI whether or not an end-user span exists for that trace. For details on which data generates spans, see Requirements.

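Older versions of some APM agents aren't compatible with browser distributed tracing. If browser spans are consistently missing from traces that include APM spans, see the distributed tracing requirements for browser data and make sure all applications are running a supported version of the APM agent.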

For other causes of orphaned browser spans, see Browser span reporting.

Other problems

Possible cause: For applications with multiple app names, the entity.name attribute is associated only with the primary app name. To search by one of the other app names, use the appName attribute.
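For example, a query on `Span` events filtered by `appName` rather than `entity.name` should find spans reported under a secondary app name. The Python helper below only builds the NRQL string; the helper name and the exact query shape are illustrative assumptions, so check the NRQL reference before relying on it. It refuses names containing single quotes rather than guessing at NRQL's escaping rules.

```python
def spans_by_app_name(app_name: str, since_minutes: int = 60) -> str:
    """Build an NRQL query that counts spans per trace for a given app name (sketch)."""
    if "'" in app_name:
        # Escaping rules for quotes are not handled in this sketch.
        raise ValueError("app names containing single quotes are not supported here")
    return (
        "SELECT count(*) FROM Span "
        f"WHERE appName = '{app_name}' "
        f"FACET trace.id SINCE {since_minutes} minutes ago"
    )


if __name__ == "__main__":
    print(spans_by_app_name("checkout-secondary", since_minutes=30))
```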

Post questions about integrating with OpenTelemetry in the support forum.

Other factors affecting access

For more on factors that can affect your ability to access New Relic features, see Factors affecting access.