We recommend creating an alert using a NRQL alert condition. This doc will guide you through formatting and configuring your NRQL alert conditions to maximize efficiency and reduce noise. If you've just started with New Relic, or you haven't created an alert condition yet, we recommend starting with Alert conditions.

You can create an alert condition from:

You can also use one of our alert builders:

- Use Write your own query to build alerts from scratch.

- Use Guided mode to choose from recommended options and have your NRQL query built for you.

No matter where you begin creating an alert condition, whether through a chart or by writing your own query, NRQL is the building block upon which you can define your signal and set your thresholds.

NRQL alert syntax

Here's the basic syntax for creating all NRQL alert conditions.

SELECT function(attribute)
FROM Event
WHERE attribute [comparison] [AND|OR ...]

Clause | Notes |

|---|---|

Required | Supported functions that return numbers include:

|

Required | Multiple data types can be targeted. Supported data types:

|

| Use the |

| Include an optional Use the Faceted queries can return a maximum of 20000 values for static and anomaly conditions. Important: If the query returns more than the maximum number of values, the alert condition can't be created. If you create the condition and the query returns more than this number later, the alert will fail. Modify your query so that it returns fewer values. |

Reformatting incompatible NRQL

Some elements of NRQL used in charts don't make sense in the context of streaming alerts. Here's a list of the most common incompatible elements and suggestions for reformatting a NRQL alert query to achieve the same effect.

Element | Notes |

|---|---|

| Example: NRQL conditions produce a never-ending stream of windowed query results, so the |

| In NRQL queries, the For NRQL conditions and if not using sliding window aggregation, the equivalent property to |

| In NRQL queries, the If you are configuring an NRQL condition with a static threshold, the equivalent property to the |

| The

|

| These functions are not yet supported for NRQL alerting. |

Multiple aggregation functions | Each condition can only target a single aggregated value. To alert on multiple values simultaneously, you'll need to decompose them into individual conditions within the same policy. Original query: Decomposed: |

| The |

| The You can enable sliding windows in the UI. When creating or editing a condition, go to Adjust to signal behavior > Data aggregation settings > Use sliding window aggregation. For example to create an alert condition equivalent to You would use a data aggregation window duration of 5 minutes, with a sliding window aggregation of 1 minute. |

| In NRQL queries, the

|

Subqueries | Subqueries are not compatible with streaming because subquery execution requires multiple passes through data. |

Subquery JOINs | Subquery JOINs are not compatible with streaming alerts because subquery execution requires multiple passes through data. |

NRQL alert threshold examples

Here are some common use cases for NRQL conditions. These queries will work for static and anomaly condition types.

NRQL conditions and query order of operations

By default, the aggregation window duration is 1 minute, but you can change the window to suit your needs. Whatever the aggregation window, New Relic will collect data for that window using the function in the NRQL condition's query. The query is parsed and executed by our systems in the following order:

- FROM clause. Which event type needs to be grabbed?

- WHERE clause. What can be filtered out?

- SELECT clause. What information needs to be returned from the now-filtered data set?

Example: null value returned

Let's say this is your alert condition query:

SELECT count(*)
FROM SyntheticCheck
WHERE monitorName = 'My Cool Monitor' AND result = 'FAILED'

If there are no failures for the aggregation window:

- The system will execute the FROM clause by grabbing all SyntheticCheck events on your account.

- Then it will execute the WHERE clause to filter through those events by looking only for the ones that match the monitor name and result specified.

- If there are still events left to scan through after completing the FROM and WHERE operations, the SELECT clause will be executed. If there are no remaining events, the SELECT clause will not be executed.

This means that aggregators like count() and uniqueCount() will never return a zero value. When there is a count of 0, the SELECT clause is ignored and no data is returned, resulting in a value of NULL.
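The order of operations described above can be sketched in a few lines of Python. This is only an illustration of the FROM, WHERE, SELECT sequence, not how the alerts pipeline is implemented; the `events` list and the `count_failed` function are hypothetical names made up for this example.

```python
from typing import Dict, List, Optional

# Hypothetical in-memory events standing in for one aggregation window.
events: List[Dict[str, str]] = [
    {"eventType": "SyntheticCheck", "monitorName": "My Cool Monitor", "result": "SUCCESS"},
    {"eventType": "SyntheticCheck", "monitorName": "Other Monitor", "result": "FAILED"},
]


def count_failed(window: List[Dict[str, str]]) -> Optional[int]:
    """Mimic FROM -> WHERE -> SELECT count(*) for the example query."""
    # FROM: keep only SyntheticCheck events.
    from_rows = [e for e in window if e["eventType"] == "SyntheticCheck"]
    # WHERE: keep only failed checks for the named monitor.
    where_rows = [
        e for e in from_rows
        if e["monitorName"] == "My Cool Monitor" and e["result"] == "FAILED"
    ]
    # SELECT only runs when events remain; otherwise nothing is returned (NULL).
    if not where_rows:
        return None
    return len(where_rows)
```

With the sample `events`, no row survives the WHERE step, so the function returns `None` rather than `0`, which mirrors the NULL result described above.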

Example: zero value returned

If you have a data source delivering legitimate numeric zeroes, the query will return zero values and not null values.

Let's say this is your alert condition query, and that MyCoolAttribute is an attribute that can sometimes return a zero value.

SELECT average(MyCoolAttribute)
FROM MyCoolEvent

If, in the aggregation window being evaluated, there's at least one instance of MyCoolEvent and if the average value of all MyCoolAttribute attributes from that window is equal to zero, then a 0 value will be returned. If there are no MyCoolEvent events during that window, then a NULL will be returned due to the order of operations.

Example: null vs. zero value returned

To determine how null values will be handled, adjust the loss of signal and gap filling settings in the Alert conditions UI.

You can avoid NULL values with a query order of operations shortcut. To do this, use a filter sub-clause, then include all filter elements within that sub-clause. The main body of the query should include a WHERE clause that defines at least one entity so, for any aggregation window where the monitor performs a check, the signal will be tied to that entity. The SELECT clause will then run and apply the filter elements to the data returned by the main body of the query, which will return a value of 0 if the filter elements result in no matching data.

Here's an example to alert on FAILED results:

SELECT filter(count(*), WHERE result = 'FAILED')
FROM SyntheticCheck
WHERE monitorName = 'My Favorite Monitor'

In this example, a window with a successful result would return a 0, allowing the condition's threshold to resolve on its own.
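To see why moving the result check into a filter sub-clause yields 0 instead of NULL, here is a minimal Python sketch of the same logic. It is an illustration only; `count_failed_filtered` is a hypothetical helper, and events are modeled as plain dictionaries.

```python
from typing import Dict, List, Optional


def count_failed_filtered(window: List[Dict[str, str]]) -> Optional[int]:
    """Mimic: SELECT filter(count(*), WHERE result = 'FAILED')
    FROM SyntheticCheck WHERE monitorName = 'My Favorite Monitor'."""
    # FROM + WHERE: the main body filters only on event type and monitor name.
    rows = [
        e for e in window
        if e["eventType"] == "SyntheticCheck"
        and e["monitorName"] == "My Favorite Monitor"
    ]
    # If the monitor reported nothing at all, there is still no result (NULL).
    if not rows:
        return None
    # SELECT: the filter runs over the surviving rows and can count zero matches.
    return sum(1 for e in rows if e["result"] == "FAILED")
```

A window that contains only successful checks now returns `0`, while a window with no checks at all still returns `None`, which is why a lost signal threshold remains useful.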

Important

If no events (lines) are reported, the signal loss cannot be avoided even with the changes mentioned above. We recommend establishing or maintaining a Lost Signal Threshold to trigger an incident if the event stops reporting entirely.

For more information, check out our blog post on troubleshooting for zero versus null values.

Nested aggregation NRQL alerts

Nested aggregation queries are a powerful way to query your data. However, they have a few restrictions that are important to note.

NRQL condition creation tips

Here are some tips for creating and using a NRQL condition:

Topic | Tips |

|---|---|

Condition types | NRQL condition types include static and anomaly. |

Create a description | For NRQL conditions, you can create a custom description to add to each incident. Descriptions can be enhanced with variable substitution based on metadata in the specific incident. |

Query results | Queries must return a number. The condition evaluates the returned number against the thresholds you've set. |

Time period | NRQL conditions evaluate data based on how it's aggregated, using aggregation windows from 30 seconds to 120 minutes, in increments of 15 seconds. For best results, we recommend using the event flow or event timer aggregation methods. For the cadence aggregation method, the implicit

|

Lost signal threshold (loss of signal detection) | You can use loss of signal detection to alert on when your data (a telemetry signal) should be considered lost. A signal loss can indicate that a service or entity is no longer online or that a periodic job failed to run. You can also use this to make sure that incidents for sporadic data, such as error counts, are closed when no signal is coming in. |

Advanced signal settings | These settings give you options for better handling continuous, streaming data signals that may sometimes be missing. These settings include the aggregation window duration, the delay/timer, and an option for filling data gaps. For more on using these, see Advanced signal settings. |

Condition settings | Use the Condition settings to:

|

Limits on conditions | See the maximum values. |

Health status | In order for a NRQL alert condition health status display to function properly, the query must be scoped to a single entity. To do this, either use a |

Examples | For more information, see: |

Managing tags on conditions

When you edit an existing NRQL condition, you have the option to add or remove tags associated with the condition entity. To do this, click the Manage tags button below the condition name. In the menu that pops up, add or delete a tag.

Condition edits can reset condition evaluation

When you edit NRQL alert conditions in some specific ways (detailed below), their evaluations are reset, meaning that any evaluation up until that point is lost, and the evaluation starts over from that point. The two ways this will affect you are:

- For "for at least x minutes" thresholds: because the evaluation window has been reset, there will be a delay of at least x minutes before any incidents can be reported.

- For anomaly conditions: the condition starts over again and all anomaly learning is lost.

The following actions cause an evaluation reset for NRQL conditions:

- Changing the query

- Changing the aggregation window, aggregation method, or aggregation delay/timer setting

- Changing the "close incidents on signal loss" setting

- Changing any gap fill settings

- Changing the anomaly direction (if applicable): higher, lower, or higher/lower

- Changing the threshold value, threshold window, or threshold operator

- Changing the slide-by interval (on sliding windows aggregation conditions only)

The following actions (along with any other actions not covered in the above list) will not reset the evaluation:

- Changing the loss of signal time window (expiration duration)

- Changing the time function (switching "for at least" to "at least once in," or vice-versa)

- Toggling the "open incident on signal loss" setting
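If you automate condition edits, it can help to check in advance whether a change will reset evaluation. The sketch below encodes the two lists above as a simple lookup. The setting names are hypothetical labels chosen for this example and are not API field names.

```python
from typing import Iterable

# Hypothetical labels for the edits listed above as causing an evaluation reset.
RESETTING_CHANGES = frozenset({
    "query",
    "aggregation_window",
    "aggregation_method",
    "aggregation_delay_or_timer",
    "close_incidents_on_signal_loss",
    "gap_fill",
    "anomaly_direction",
    "threshold_value",
    "threshold_window",
    "threshold_operator",
    "slide_by_interval",
})


def resets_evaluation(changed_settings: Iterable[str]) -> bool:
    """Return True if any edited setting is on the reset list.

    Anything not on the list (for example the loss of signal time window)
    is treated as non-resetting, matching the text above.
    """
    return any(name in RESETTING_CHANGES for name in changed_settings)
```

For example, `resets_evaluation(["expiration_duration"])` is `False`, while `resets_evaluation(["expiration_duration", "query"])` is `True`.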

Alert condition types

When you create a NRQL alert, you can choose from different types of conditions:

NRQL alert condition types | Description |

|---|---|

Static | This is the simplest type of NRQL condition. It allows you to create a condition based on a NRQL query that returns a numeric value. Optional: Include a |

Anomaly (Dynamic anomaly) | Uses a self-adjusting condition based on the past behavior of the monitored values. Uses the same NRQL query form as the static type,

including the optional |

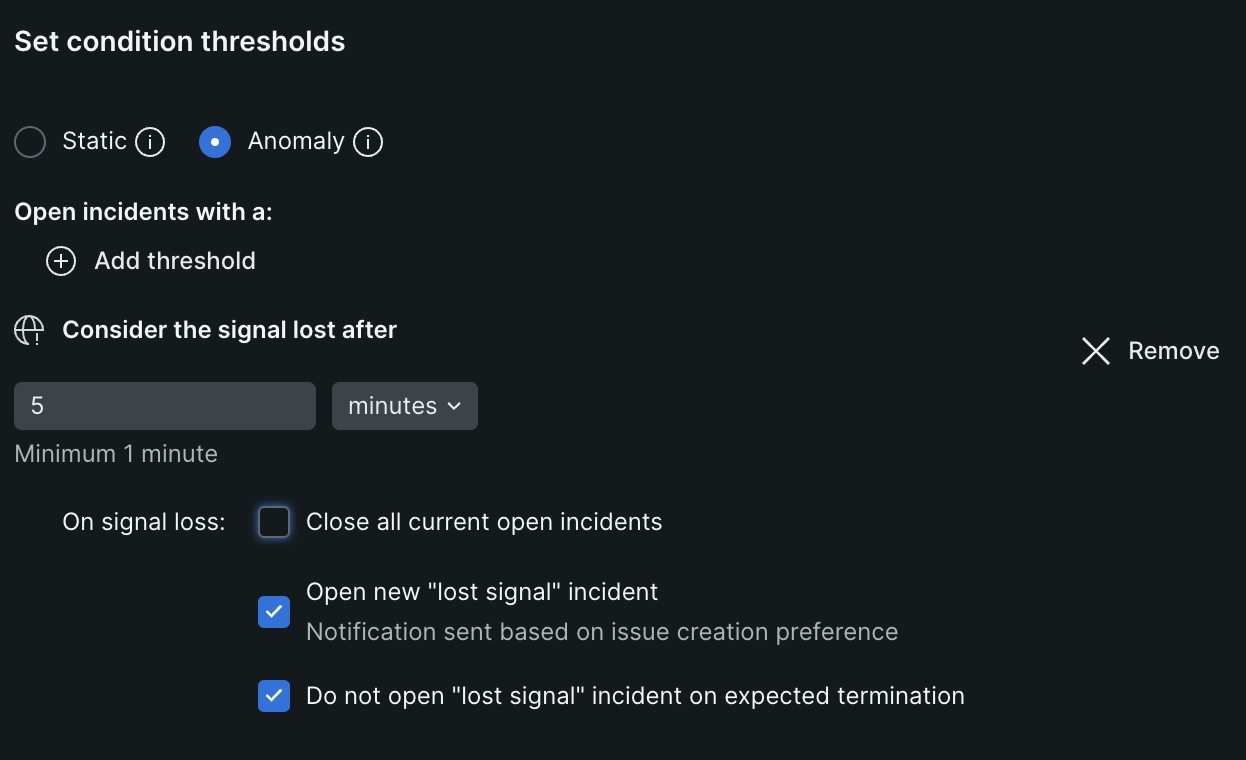

Set the loss of signal threshold

Important

The loss of signal feature requires a signal to be present before it can detect that the signal is lost. If you enable a condition while a signal is not present, no loss of signal will be detected and the loss of signal feature will not activate.

Loss of signal occurs when no data matches the NRQL condition over a specific period of time. You can set your loss of signal threshold duration and also what happens when the threshold is crossed.

Go to one.newrelic.com > All capabilities > Alerts > Alert conditions (Policies), then + New alert condition. Loss of signal is only available for NRQL conditions.

You may also manage these settings using the GraphQL API (recommended), or the REST API. Go here for specific GraphQL API examples.

Loss of signal settings:

Loss of signal settings include a time duration and a few actions.

Signal loss expiration time

- UI label: Signal is lost after:

- GraphQL Node: expiration.expirationDuration

- Expiration duration is a timer that starts and resets when we receive a data point in the streaming alerts pipeline. If we don't receive another data point before your 'expiration time' expires, we consider that signal to be lost. This can be because no data is being sent to New Relic or the WHERE clause of your NRQL query is filtering that data out before it is streamed to the alerts pipeline. Note that when you have a faceted query, each facet is a signal. So if any one of those signals ends during the duration specified, that will be considered a loss of signal.

- The loss of signal expiration time is independent of the threshold duration and triggers as soon as the timer expires.

- The maximum expiration duration is 48 hours. This is helpful when monitoring for the execution of infrequent jobs. The minimum is 30 seconds, but we recommend using at least 3-5 minutes.
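The expiration timer behavior described above can be modeled roughly as follows. This is a simplified sketch under stated assumptions: `SignalTimer` is a hypothetical class, times are plain seconds, and one timer instance stands for one signal (so a faceted query would need one timer per facet).

```python
from dataclasses import dataclass
from typing import Optional

MIN_EXPIRATION_S = 30             # minimum expiration duration from the text
MAX_EXPIRATION_S = 48 * 60 * 60   # maximum expiration duration (48 hours)


@dataclass
class SignalTimer:
    """Hypothetical model of the loss of signal timer for a single signal."""
    expiration_s: int
    last_seen_s: Optional[float] = None

    def __post_init__(self) -> None:
        if not MIN_EXPIRATION_S <= self.expiration_s <= MAX_EXPIRATION_S:
            raise ValueError("expiration must be between 30 seconds and 48 hours")

    def on_data_point(self, now_s: float) -> None:
        # Every data point starts or resets the timer.
        self.last_seen_s = now_s

    def is_lost(self, now_s: float) -> bool:
        # A signal must have been seen at least once before it can be lost.
        if self.last_seen_s is None:
            return False
        return now_s - self.last_seen_s >= self.expiration_s
```

For example, with `expiration_s=300`, a data point at second 0 keeps the signal alive at second 299 and marks it lost at second 300, unless another data point arrives first.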

Loss of signal actions

Once a signal is considered lost, you have a few options:

- Close all current open incidents: This closes all open incidents that are related to a specific signal. It won't necessarily close all incidents for a condition. If you're alerting on an ephemeral service, or on a sporadic signal, you'll want to choose this action to ensure that incidents are closed properly. The GraphQL node name for this is closeViolationsOnExpiration.

- Open new incidents: This will open a new incident when the signal is considered lost. These incidents will indicate that they are due to a loss of signal. Based on your incident preferences, this should trigger a notification. The GraphQL node name for this is openViolationOnExpiration.

- When you enable both of the above actions, we'll close all open incidents first, and then open a new incident for loss of signal.

- Do not open "lost signal" incidents on expected termination: When a signal is expected to terminate, you can choose not to open a new incident. This is useful when you know that a signal will be lost at a certain time, and you don't want to open a new incident for that signal loss. The GraphQL node name for this is ignoreOnExpectedTermination.

Important

To prevent a loss of signal incident from opening when Do not open "lost signal" incident on expected termination is selected, you must add the tag termination: expected to the entity. This tag tells us that we expected the signal to end. See how to add the tag directly to the entity. Note that the tag hostStatus: shutdown will also prevent a "loss of signal" incident from opening. For more information, see Create a "host not reporting" condition.

To create a NRQL alert configured with loss of signal detection in the UI:

Follow the instructions to create a NRQL alert condition.

On the Set thresholds step you'll find the option to Add lost signal threshold. Click this button.

Set the signal expiration duration time in minutes or seconds in the Consider the signal lost after field.

Choose what you want to happen when the signal is lost. You can check any or all of the following options: Close all current open incidents, Open new "lost signal" incident, Do not open "lost signal" incident on expected termination. These control how loss of signal incidents will be handled for the condition.

You can optionally add or remove static/anomaly numeric thresholds. A condition that has only a loss of signal threshold and no static/anomaly numeric thresholds is valid, and it's considered a "stand alone" loss of signal condition.

Caution

When creating a stand alone loss of signal condition, consider the query used. Using complex queries could cost more than what's necessary to monitor a signal.

Continue through the steps to save your condition.

If you selected Do not open "lost signal" incident on expected termination, you must add the termination: expected tag to the entity to prevent a loss of signal incident from opening. See how to add the tag directly to the entity.

Tip

You might be curious why you'd ever want to have both Open new "lost signal" incident and Do not open "lost signal" incident on expected termination set to true. Think of it like this: you want to get a notification when you lose a signal. Except, you don't want a notification for the one time you know the signal will stop because you scheduled it. In that case, you'd set both to true, and when you expect the signal to be lost, you'd add the termination: expected tag to the relevant entity.
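The way the three loss of signal options combine can be written as a small decision function. This is a sketch of the behavior described in this section, not the actual implementation. The function name and return labels are hypothetical, the parameters borrow the GraphQL node names mentioned above, and the hostStatus: shutdown tag is deliberately not modeled.

```python
from typing import Dict, List


def actions_on_signal_loss(
    close_violations_on_expiration: bool,
    open_violation_on_expiration: bool,
    ignore_on_expected_termination: bool,
    entity_tags: Dict[str, str],
) -> List[str]:
    """Return the actions taken, in order, when a signal is considered lost."""
    actions: List[str] = []
    # Closing happens first when both close and open are enabled.
    if close_violations_on_expiration:
        actions.append("close_open_incidents")
    # The termination only counts as expected if the option is on AND the tag is set.
    expected = (
        ignore_on_expected_termination
        and entity_tags.get("termination") == "expected"
    )
    if open_violation_on_expiration and not expected:
        actions.append("open_lost_signal_incident")
    return actions
```

With both "open" and "ignore on expected termination" enabled, an untagged entity still produces a lost signal incident, while an entity tagged termination: expected does not.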

Incidents open due to loss of signal close when:

- the signal comes back. Newly opened lost signal incidents will close immediately when new data is evaluated.

- the condition they belong to expires. By default, conditions expire after 3 days.

- you manually close the incident with the Close all current open incidents option.

Tip

Loss of signal detection doesn't work on NRQL queries that use nested aggregation or sub-queries.

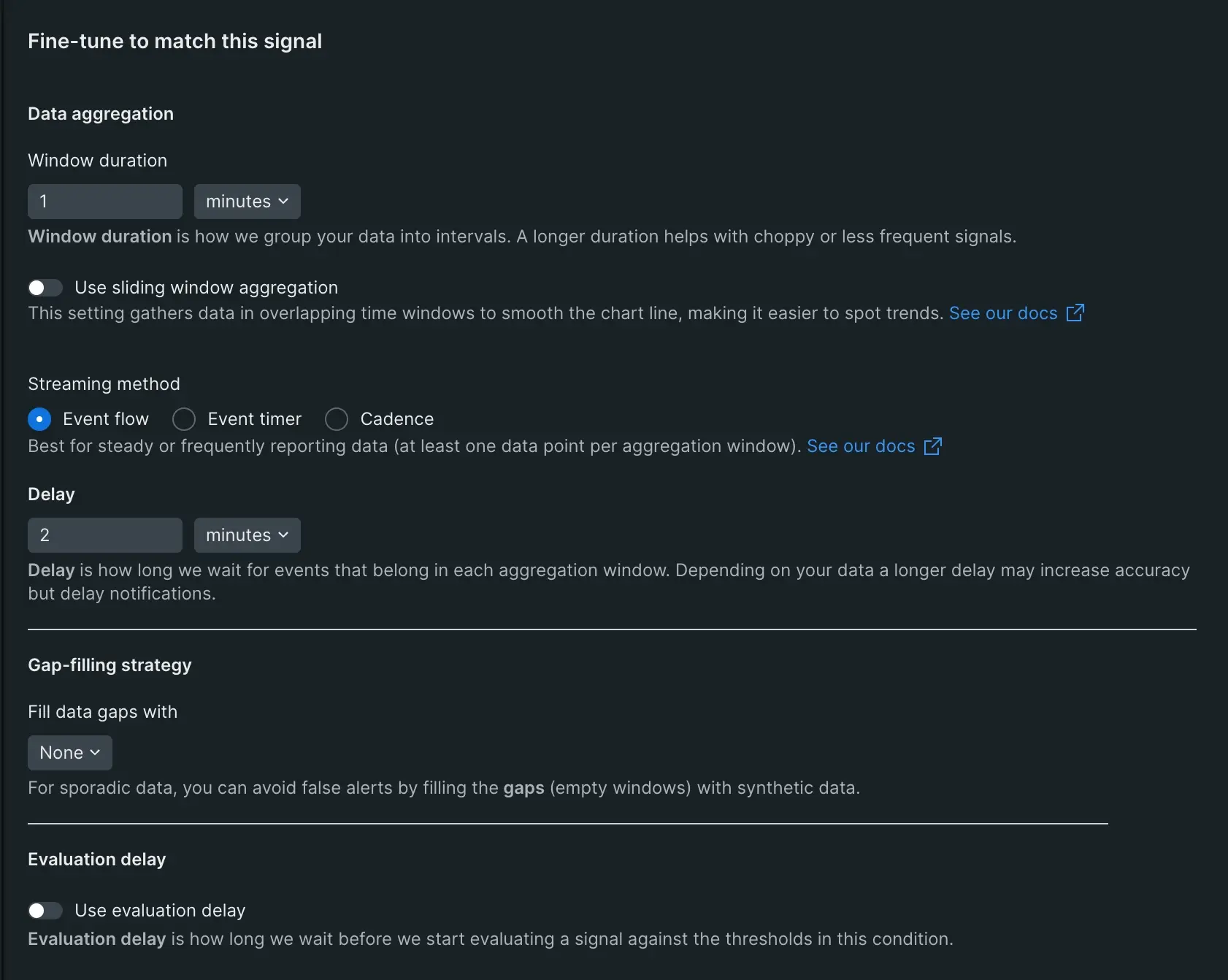

Advanced signal settings

When creating a NRQL alert condition, use the advanced signal settings to control streaming alert data and avoid false alarms.

When creating a NRQL condition, there are several advanced signal settings:

- Aggregation window duration

- Sliding window aggregation

- Streaming method

- Delay/timer

- Fill data gaps

- Evaluation delay

To read an explanation of what these settings are and how they relate to each other, see Streaming alerts concepts. Below are instructions and tips on how to configure them.

Aggregation window duration

You can set the aggregation window duration to choose how long data is accumulated in a streaming time window before it's aggregated. You can set it to anything between 30 seconds and 120 minutes. The default is one minute.
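If you set window durations programmatically, a quick range check can catch invalid values early. The sketch below combines the 30 seconds to 120 minutes range stated here with the 15-second increments mentioned in the tips table earlier in this doc. `is_valid_aggregation_window` is a hypothetical helper, not part of any New Relic API.

```python
MIN_WINDOW_S = 30            # 30 seconds
MAX_WINDOW_S = 120 * 60      # 120 minutes
WINDOW_STEP_S = 15           # increments of 15 seconds
DEFAULT_WINDOW_S = 60        # default of one minute


def is_valid_aggregation_window(seconds: int) -> bool:
    """Check that a duration is in range and a multiple of 15 seconds."""
    return (
        MIN_WINDOW_S <= seconds <= MAX_WINDOW_S
        and seconds % WINDOW_STEP_S == 0
    )
```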

Sliding window aggregation

You can use sliding windows to create smoother charts. This is done by creating overlapping windows of data.

Once enabled, set the "slide by interval" to control how much overlap time your aggregated windows have. The interval must be shorter than the aggregation window while also dividing evenly into it.
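The slide-by rule (shorter than the aggregation window and dividing evenly into it) and the resulting overlapping windows can be illustrated like this. Both functions are hypothetical helpers written for this example, and all times are in seconds.

```python
from typing import List, Tuple


def is_valid_slide_by(window_s: int, slide_by_s: int) -> bool:
    """The interval must be shorter than the window and divide it evenly."""
    return 0 < slide_by_s < window_s and window_s % slide_by_s == 0


def sliding_windows(
    start_s: int, window_s: int, slide_by_s: int, count: int
) -> List[Tuple[int, int]]:
    """Return the first `count` overlapping (start, end) windows."""
    if not is_valid_slide_by(window_s, slide_by_s):
        raise ValueError("slide-by interval must be shorter than and divide the window")
    return [
        (start_s + i * slide_by_s, start_s + i * slide_by_s + window_s)
        for i in range(count)
    ]
```

For a 5-minute window with a 1-minute slide-by interval, `sliding_windows(0, 300, 60, 3)` gives `[(0, 300), (60, 360), (120, 420)]`: each window covers 5 minutes and starts 1 minute after the previous one.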

Important

Immediately after you create a new sliding windows alert condition or perform any action that can cause an evaluation reset, your condition will need time to build up an "aggregated buffer" for the duration of the first aggregation window. During that time, no incidents will trigger. Once that single aggregation window has passed, a complete "buffer" will have been built and the condition will function normally.

Streaming method

Choose between three streaming aggregation methods to get the best evaluation results for your conditions.

Delay/timer

You can adjust the delay/timer to coordinate our streaming alerting algorithm with your data's behavior. If your data is sparse or inconsistent, you may want to use the event timer aggregation method.

For the cadence method, the total supported latency is the sum of the aggregation window duration and the delay.

If the data type comes from an APM language agent and is aggregated from many app instances (for example, Transaction, TransactionError, etc.), we recommend using the event flow method with the default settings.

Important

When creating NRQL conditions for data collected from Infrastructure Cloud Integrations such as AWS CloudWatch or Azure, we recommend that you use the event timer method.

Fill data gaps

Gap filling lets you customize the values to use when your signals don't have any data. You can fill gaps in your data streams with one of these settings:

- None: (Default) Choose this if you don't want to take any action on empty aggregation windows. On evaluation, an empty aggregation window will reset the threshold duration timer. For example, if a condition says that all aggregation windows must have data points above the threshold for 5 minutes, and 1 of the 5 aggregation windows is empty, then the condition won't open an incident.

- Custom static value: Choose this if you'd like to insert a custom static value into the empty aggregation windows before they're evaluated. This option has an additional, required parameter of fillValue (as named in the API) that specifies what static value should be used. This defaults to 0.

- Last known value: This option inserts the last seen value before evaluation occurs. We maintain the state of the last seen value for a minimum of 2 hours. If the configured threshold duration is longer than 2 hours, this value is kept for that duration instead.

Tip

The alerts system fills gaps in actively reported signals. This signal history is dropped after a period of inactivity and, for gap filling, data points received after this period of inactivity are treated as new signals. The inactivity length is either 2 hours or the configured threshold duration, whichever is longer.
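To make the three gap filling options concrete, here is a simplified Python sketch that fills empty windows and then applies a "for at least" style check. It is an illustration only: the option labels `"NONE"`, `"STATIC"`, and `"LAST_VALUE"` and both function names are assumptions for this example, empty windows are modeled as `None`, and the 2-hour retention of the last known value is not modeled.

```python
from typing import List, Optional


def fill_gaps(
    windows: List[Optional[float]],
    fill_option: str = "NONE",
    fill_value: float = 0.0,
) -> List[Optional[float]]:
    """Fill empty aggregation windows (None) according to the chosen option."""
    filled: List[Optional[float]] = []
    last_seen: Optional[float] = None
    for value in windows:
        if value is not None:
            last_seen = value
            filled.append(value)
        elif fill_option == "STATIC":
            filled.append(fill_value)
        elif fill_option == "LAST_VALUE":
            filled.append(last_seen)  # stays None if nothing has been seen yet
        else:
            filled.append(None)       # "NONE": leave the gap untouched
    return filled


def breaches_for_at_least(
    windows: List[Optional[float]], threshold: float, needed: int
) -> bool:
    """True if `needed` consecutive windows are above the threshold.

    An empty window resets the streak, as described for the None option.
    """
    streak = 0
    for value in windows:
        streak = streak + 1 if value is not None and value > threshold else 0
        if streak >= needed:
            return True
    return False
```

With five windows `[10, 10, None, 10, 10]`, a threshold of 5, and 5 windows required, the unfilled series does not breach because the gap resets the streak, while the same series filled with the last known value does.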

To learn more about signal loss, gap filling, and how to request access to these features, see this Support Forum post.

Options for editing data gap settings:

- In the NRQL conditions UI, go to Condition settings > Advanced signal settings > fill data gaps with and choose an option.

- If using our NerdGraph API (preferred), this node is located at:

actor : account : alerts : nrqlCondition : signal : fillOption | fillValue

- NerdGraph is our recommended API for this but if you're using our REST API, you can find this setting in the REST API explorer under the "signal" section of the Alert NRQL conditions API.

Evaluation delay

You can enable the Use evaluation delay flag and set up to 120 minutes to delay the evaluation of incoming signals.

When new entities are first deployed, resource utilization on the entity is often unusually high. In autoscale environments this can easily create a lot of false alerts. By delaying the start of alert detection on signals emitted from new entities you can significantly reduce the number of false alarms associated with deployments in orchestrated or autoscale environments.

Options to enable evaluation delay:

- In the NRQL conditions UI, go to Adjust to signal behavior > Use evaluation delay.

- If using our NerdGraph API, this node is located at:

actor : account : alerts : nrqlCondition : signal : evaluationDelay
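Conceptually, evaluation delay means a new signal is ignored until it has existed for the configured time. The sketch below shows that idea only. `should_evaluate` is a hypothetical helper, times are in seconds, and the exact boundary behavior of the real feature is an assumption.

```python
MAX_EVALUATION_DELAY_S = 120 * 60  # up to 120 minutes, per the text above


def should_evaluate(first_seen_s: float, now_s: float, evaluation_delay_s: int) -> bool:
    """Return True once a signal is old enough to be evaluated."""
    if not 0 <= evaluation_delay_s <= MAX_EVALUATION_DELAY_S:
        raise ValueError("evaluation delay must be between 0 and 120 minutes")
    # Assumption: evaluation starts as soon as the delay has fully elapsed.
    return now_s - first_seen_s >= evaluation_delay_s
```

For example, with a 15-minute delay (900 seconds), a host first seen at second 0 is skipped at second 600 and evaluated from second 900 onward.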

HNR NRQL conditions in guided mode

NRQL condition guided mode offers a curated experience for creating infrastructure "host not reporting" (HNR) NRQL conditions. This is the preferred alternative to creating infrastructure "host not reporting" conditions.