With alerts' correlation logic, related issues are grouped together to reduce distracting and redundant alerts. As events come into your system they are eligible for our correlation logic. Eligible issues are evaluated based on time, alert context, and relationship data. If multiple issues are related then our correlation logic will funnel the related alert events into a single, comprehensive issue.

We call this correlation logic decisions. We have built-in decisions but you can also create and customize your own on the decisions page. To find the decisions page go to one.newrelic.com > All capabilities > Alerts > Decisions. The more you configure your decisions to best suit your needs, the better New Relic can correlate your alert events, reduce noise, and provide increased context for on-call teams.

What is correlation and how does it work?

Your most recent and active alert events are available for our correlation logic. For example, let's say your system has received two alerts saying a synthetic monitor is failing in Australia and London. These two alerts will have created their own unique alert events. Those alert events will generate their own unique issues based on your team's existing alert event creation policy. The correlation logic of New Relic will then test those alert events against each other to find similarities. In this case, it's the same monitor that is failing across multiple locations, so New Relic will merge both alert events into a single issue that contains each relevant event.

When we correlate events, we check every pair of alert events against each other and combine as many as possible. For example:

- Our algorithm correlates alert event A and B (call it "AB").

- Our algorithm correlates alert event B and C (call it "BC").

- Because B is present in both issues, the algorithm then correlates all three alert events together into one issue.
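The transitive merge described above can be sketched with a small union-find structure. This is only an illustration under the assumption that correlated pairs are already known; the function name `merge_correlated` is hypothetical, and the sketch isn't a description of the internal algorithm New Relic uses.

```python
from collections import defaultdict

def merge_correlated(events, correlated_pairs):
    """Group events into issues by merging every correlated pair transitively."""
    parent = {event: event for event in events}

    def find(event):
        # Follow parent links to the group representative, compressing the path.
        while parent[event] != event:
            parent[event] = parent[parent[event]]
            event = parent[event]
        return event

    for first, second in correlated_pairs:
        # Join the two groups that contain the correlated events.
        parent[find(first)] = find(second)

    issues = defaultdict(list)
    for event in events:
        issues[find(event)].append(event)
    return sorted(sorted(group) for group in issues.values())

# "AB" and "BC" share B, so A, B, and C end up in one issue; D stays alone.
issues = merge_correlated(["A", "B", "C", "D"], [("A", "B"), ("B", "C")])
print(issues)
```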

Configure correlation policy

To enable correlation on alert-based issues, turn on correlation for the respective alert policy.

Check the box Correlate and suppress noise to enable correlation for the alert policy.

Decision types

Decisions determine how alert event intelligence correlates issues together. The correlation logic of New Relic is available to your team in three different decision types:

- Global decision: A broad set of default decisions are automatically enabled when you start using alerts.

- Suggested decision: New Relic's correlation engine constantly evaluates your event data to suggest decisions that capture correlation patterns to reduce noise. You can preview simulation results of a suggested decision and choose to activate.

- Custom decision: Your team can customize decisions based on your use case to enhance correlation effectiveness. The decision UI of New Relic gives you flexibility to configure all dimensions in a decision.

Review your active decisions

To review your team's existing decisions:

- Go to one.newrelic.com > All capabilities > Alerts > Decisions.

- Review the list of active decisions. To see the rule logic that creates correlations between your issues, click the decision.

- To see examples of alert events the decision correlated, click the Recent correlations tab.

- You have the option to enable or disable these global decisions.

Configure sources

Before configuring your decisions, it's important to determine the sources you would like to correlate. Sources are your data inputs.

You can get data from any of the following sources:

Global decisions

Global decisions are automatically enabled when your team starts using alerts. They require no configuration and are immediately available for your team. Global decisions cover a variety of correlation scenarios.

The table below provides descriptions for all of the global decisions that are automatically enabled.

| Decision name | Description |
|---|---|
| Same New Relic Target Name (NRQL) | Correlation is activated when the entity name with an exceeded threshold and NRQL query are the same. Relevant events from the same NRQL alert condition will be identified. For example, this decision helps relate issues that have the same transaction query latency deviation. |
| Same New Relic Target Name (Non-NRQL) | Correlation is activated because the New Relic non-NRQL alert thresholds are the same. Does not apply to REST source. Non-NRQL entity refers to an entity, typically of APPLICATION or HOST type; see the New Relic GitHub repo on entity synthesis. With this decision, relevant issues from the same entity will be identified. For example, a host high-memory issue and a host not-reporting issue are very likely due to the same cause. |
| Same New Relic Target ID | Correlation is activated because the New Relic non-NRQL alert thresholds are the same. Does not apply to REST source. Use the entity ID to uniquely identify an entity instance; learn more about entity.guid. |
| Same New Relic Condition | Correlation is activated because the New Relic condition IDs are the same. For example, a CPU usage increase across related services will trigger alert events from the same CPU usage condition, and these will be identified. This logic is valuable beyond the alert policy issue creation preference of one issue per condition, due to its condition-level granularity and flexibility in defining the correlation time window. |
| Same New Relic Condition and Deep Link Url | Correlation is activated because the New Relic condition IDs and deep link URL are the same. The deep link URL provides time series and time range information in addition to the alert condition. Correlating these issues makes it easier for you to look at related alert events in the alert event response flow with time-scoped metrics, and perform deep analysis. The deep link URL is generated automatically if alert events are triggered by New Relic alert conditions, while for the REST source deepLinkUrl should be user defined. |
| Same New Relic Condition and Title | Correlation is activated because the New Relic condition names and titles are the same. This is a refined option that compares titles in addition to conditions to reveal tighter relevance with the same alert message. |
| Same k8s Deployment | Correlation logic is activated because the Kubernetes deployments are the same. Many alert events come from single deployment changes. This decision reduces issues from the same troublesome Kubernetes entity deployment. |
| Same Application Name, Policy and Id | Correlation logic is activated because the custom application name, policy, and custom ID are the same. We correlate issues with these elements to reduce application issues, particularly for custom tag users. Learn more about tags. The custom tag ID could be defined by condition family ID or other ID values used as a key to identify connections between data. |
| Similar Alert Message | Correlation is activated because alert events have similar titles, and are from the same entity. This reduces issues from the same entity that are caused by similar alert conditions. |
| Same Secure Credential, Public Location and Type | Correlation is activated because the secure credential, public location, and custom type are the same respectively. This correlates issues from the same geo location/region with the same security credentials that are normally triggered by a single root cause (for example, synthetics monitor failure), and can very likely be addressed with the same solution. Add tags to benefit from this decision. |
| Similar Issue Structure | Correlation is activated because both alert events have similar attribute structure and data contents. This is a simpler version of clustering; it uses advanced similarity algorithms in matrix computation to reduce highly related issues. |
| Topologically Dependent | Correlation is activated because alert events are generated from instances that have dependent relationships. Learn more about topology correlation out-of-the-box. |

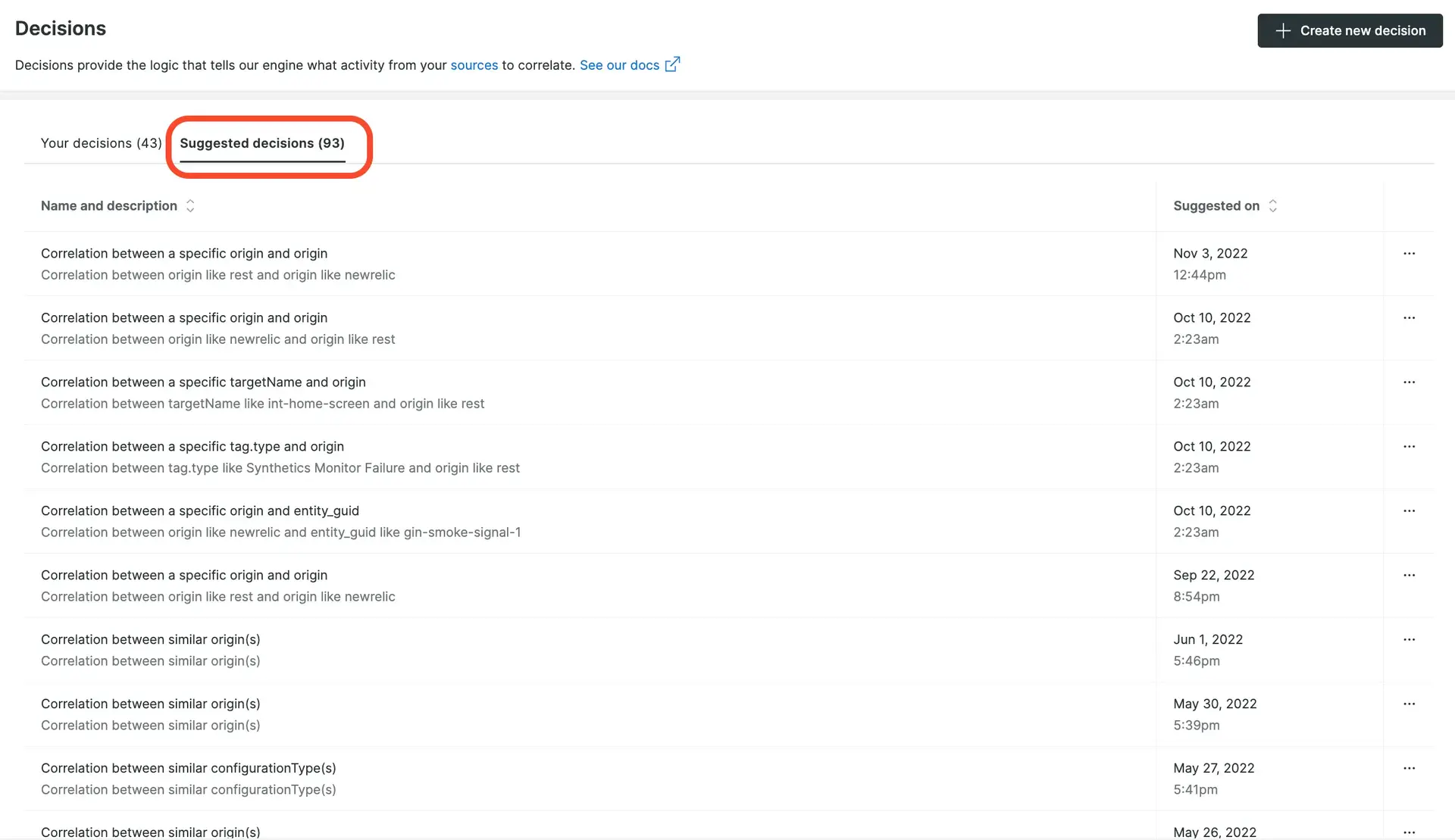

Use suggested decisions

The data from your selected sources is continuously inspected for patterns to help reduce noise. Once patterns have been observed in your data, our correlation logic will suggest unique decisions that would allow these types of events to correlate in the future.

To get started, click the Suggested decisions tab at the top of the Decisions UI page. To see the logic behind a suggested decision and its estimated correlation rate, click the suggested decision.

one.newrelic.com > All capabilities > Alerts > Decisions: Some example statistics from the decisions UI.

To enable a suggested decision, click Add to your decisions. Once activated, the decision will appear in your team's main decision table. All suggested decisions will show the creator as New Relic AI (this refers to New Relic alerts).

If the suggested decision isn't relevant to your needs, click Dismiss.

Create custom decisions

You can reduce noise and improve correlation by building your own custom decisions. To start building a decision, go to one.newrelic.com > All capabilities > Alerts > Decisions, then click Create new decision.

There are two versions of the decision builder:

- Basic decision builder (in preview)

- Advanced decision builder

For more on how to use these decision builders, keep reading.

Decision elements

A decision is composed of these elements:

- Correlate by attributes: Correlate all alert events by similarities or differences in their attributes.

- Filter by specific values: Narrow down the alert events to those with specific values.

- Filter by related entities: Select the kinds of shared connections or dependencies you want us to look for.

- Correlation time range: Sets the maximum allowed time difference between the creation times of two alert events for them to be considered for correlation.

Once the connections between alert events are set up, our algorithm groups correlated alert events into a single issue.

Basic decision builder

This feature is currently in preview and available for only some customers. If you don't have access, see the instructions for the advanced decision builder.

Here's a short video (3:25 minutes) showing how to use the basic decision builder:

The basic decision builder covers the majority of use cases and focuses on "correlate by attribute," where you can specify filter conditions for correlation matches. You can also apply the same filter logic for specific values to both alert events being correlated. For example, you can correlate alert events if the entity name is host 1 for both.

To create your own custom decision using the basic decision builder, complete the following steps. Keep in mind that steps 1, 2, and 3 are optional on their own, but at least one of the three must be defined in order to create a decision.

Step 1: Correlate by attributes

Choose an attribute from the dropdown menu. The equal operator, the most popular option, is preselected, or you can choose another operator.

The second attribute usually matches the first, so it's autopopulated. You can keep the autopopulated option or choose another attribute.

Once you're done, a simulation runs automatically.

You can repeat these steps to add up to eight logic filters.

Step 2: Filter by specific values

- To open the Filter by specific values section and see additional filters, click See more options.

- Choose an attribute.

- The equal operator is preselected, or you can select another operator.

- Select expected values for the chosen attributes, with multiple selections supported.

When complete, the simulation will run automatically.

You can repeat these steps to add up to eight logic filters.
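As a rough mental model of steps 1 and 2, the sketch below checks a pair of alert events against one "equal" correlate-by attribute and a set of value filters applied to both events. The function names and the attribute names (`entity.name`, `conditionId`) are hypothetical and chosen only for illustration; the real evaluation happens inside New Relic.

```python
def passes_value_filter(event, attribute, allowed_values):
    """Step 2 style filter: the attribute must hold one of the expected values."""
    return event.get(attribute) in allowed_values

def basic_decision_matches(event_a, event_b, correlate_attribute, value_filters):
    """Return True when both events pass every value filter and share the
    same value for the correlate-by attribute (the 'equal' operator)."""
    for attribute, allowed_values in value_filters.items():
        # In the basic builder the same filter applies to both events.
        if not passes_value_filter(event_a, attribute, allowed_values):
            return False
        if not passes_value_filter(event_b, attribute, allowed_values):
            return False
    value_a = event_a.get(correlate_attribute)
    return value_a is not None and value_a == event_b.get(correlate_attribute)

# Both events come from "host 1" and share a condition, so they match.
first = {"entity.name": "host 1", "conditionId": 42}
second = {"entity.name": "host 1", "conditionId": 42}
other = {"entity.name": "host 2", "conditionId": 42}
filters = {"entity.name": {"host 1"}}
print(basic_decision_matches(first, second, "conditionId", filters))
print(basic_decision_matches(first, other, "conditionId", filters))
```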

Step 3: Filter by related entities

Click Filter by related entities and choose the entity classes.

When your data is collected by New Relic agents, you get automatic topology correlation. Learn more about our default topology correlation.

You can also set up topology settings using our NerdGraph API. This allows any topology-related decision to be matched with your topology data. Learn more about setting up topology correlation.

Step 4: Set correlation time range

This sets the maximum allowed time difference between the creation times of two alert events for them to be considered for correlation. Alert events within this range will be assessed based on the specified rules, while those outside the range won't be correlated.

The time range is set to 20 minutes by default. You can adjust it to any value between 1 and 120 minutes.
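The time range check can be pictured as a simple comparison of creation timestamps. The following is a minimal sketch with a hypothetical helper name; treating the boundary as inclusive is an assumption, not documented behavior.

```python
from datetime import datetime, timedelta

DEFAULT_RANGE_MINUTES = 20  # default stated in the docs

def within_time_range(created_a, created_b, range_minutes=DEFAULT_RANGE_MINUTES):
    """True when two creation times are close enough to be considered for correlation."""
    if not 1 <= range_minutes <= 120:
        raise ValueError("range_minutes must be between 1 and 120")
    # Assumption: the boundary itself counts as inside the range.
    return abs(created_a - created_b) <= timedelta(minutes=range_minutes)

start = datetime(2024, 1, 1, 12, 0)
print(within_time_range(start, start + timedelta(minutes=15)))
print(within_time_range(start, start + timedelta(minutes=25)))
```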

Step 5: Testing your decision using a simulation

After adding filter logic, the system automatically runs a simulation using the past 7 days of alert event data to help you validate the decision before applying it.

You can also manually trigger the simulation by clicking Simulate, which you may want to do if something is changed in the decision.

Step 6: Name and save your decision

To access the name and description panel, click Create decision. The system generates a name based on your decision. Customize the name and description as desired.

Advanced decision builder

The advanced decision builder allows for more complex decision creation by applying different logic filters to the two alert events being correlated. For example, you can correlate alert events if one has entity name host 1 and the other has entity name host 2. It also offers advanced settings beyond the time window.

To use the advanced decision builder:

- Go to one.newrelic.com > All capabilities > Alerts > Decisions.

- Click Create new decision, and then click Use advanced builder.

For details on the available options, keep reading.

Important terms:

- Logic filter: Logic condition defined with an operator on an attribute.

- Segment: A group of alert events that satisfy a combination of logic filters.

To create your own custom decision, complete the following steps. Keep in mind that steps 1, 2, and 3 are optional on their own, but at least one of the three must be defined in order to create a decision.

Step 1: Filter your data

Correlation occurs between any two alert events. If no filters are defined then all incoming alert events will be considered by the decision. The more you configure your decisions to suit your needs, the better we can correlate your alert events, reduce noise, and provide increased context for on-call teams.

Your team can define your filters for the first segment of alert events, and the second segment of alert events. Filter operators range from substring matching to regex matching to help you target the alert events you want and exclude those you don't.
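To make the idea of two segments concrete, here is a small sketch in which each segment is a list of logic filters and a pair qualifies when one event falls in each segment. The names `in_segment` and `pair_in_segments`, the `entity.name` attribute, and the assumption that the order of the two events doesn't matter are all illustrative rather than taken from the product.

```python
def in_segment(event, filters):
    """True when the event satisfies every logic filter of a segment.
    An empty filter list accepts every event."""
    return all(predicate(event.get(attribute)) for attribute, predicate in filters)

def pair_in_segments(event_a, event_b, first_segment, second_segment):
    """Check whether one event falls in each segment, in either order (an assumption)."""
    return (
        in_segment(event_a, first_segment) and in_segment(event_b, second_segment)
    ) or (
        in_segment(event_b, first_segment) and in_segment(event_a, second_segment)
    )

# One segment targets "host 1", the other targets "host 2".
first_segment = [("entity.name", lambda value: value == "host 1")]
second_segment = [("entity.name", lambda value: value == "host 2")]
print(pair_in_segments({"entity.name": "host 2"}, {"entity.name": "host 1"},
                       first_segment, second_segment))
```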

Step 2: Correlate by attributes

Once you've filtered your data, define the logic used when comparing the alert events' context. You can correlate events based on the following methods:

- Attribute value comparisons with standard operators

- Attribute value similarity using similarity algorithms

- Attribute value regex with capture groups

- Entire alert event comparisons using similarity or clustering algorithms
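As an illustration of correlating by regex with capture groups, the sketch below extracts the captured parts from two attribute values and compares them. It uses Python's `re` module, which may differ in details from the regex dialect the decision builder follows; the helper name and the host naming pattern are hypothetical.

```python
import re

def capture_groups_match(value_a, value_b, pattern):
    """True when the pattern matches both values and the captured groups are equal."""
    match_a = re.search(pattern, value_a)
    match_b = re.search(pattern, value_b)
    if match_a is None or match_b is None:
        return False
    return match_a.groups() == match_b.groups()

# Capture the service prefix and ignore the instance number.
pattern = r"^(\w+)-web-\d+$"
print(capture_groups_match("checkout-web-01", "checkout-web-07", pattern))
print(capture_groups_match("billing-web-01", "checkout-web-07", pattern))
```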

Step 3: Correlate by related entities

For automatic topology correlation, make sure your telemetry data is collected by New Relic agents. Learn more about topology correlation out-of-the-box.

You can also set up topology settings using our NerdGraph API. This allows any topology-related decision to be matched with your topology data. Learn more about setting up topology correlation.

Step 4: Give it a name

After you configure your decision logic, give it a recognizable name and description.

Tip

Minimize security concerns by ensuring you don't add sensitive or personal information to these open text fields.

The name and description are used in notifications and other areas of the UI to indicate which decision caused a pair of alert events to be correlated. If you don't want to change the default advanced settings in the next step, click Create decision to finish.

Step 5: Use advanced settings

Use the advanced settings area to further customize how your decision behaves when correlating events. Each setting has a default value so customization is optional.

- Time window: Sets the maximum time between the creation times of two alert events for them to be eligible for correlation.

- Issue priority: Overrides the default priority setting (inherit priority) to add higher or lower priority if the alert events are correlated.

- Frequency: Modifies the minimum number of alert events that need to meet the decision logic for the decision to trigger.

- Similarity: If you're using similar to operators in your decision logic, you can choose from a list of algorithms and set the sensitivity. This will apply to all similar to operators in your decision.

Logic operators

Decisions provide a set of operators to help you flexibly define how an alert event's attribute value is evaluated in a logic filter. The basic ones are equals, contains, starts with, ends with, and exists, along with their negated counterparts (for example, does not equal).

There is also a similarity operator, is similar to, for which you can specify the underlying similarity algorithm. By default, it uses Levenshtein distance.
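For readers unfamiliar with Levenshtein distance, the sketch below computes it with the standard dynamic-programming approach and turns it into a similarity check. The normalization by the longer string and the `threshold` parameter are assumptions made for illustration; they aren't the sensitivity scale used in the decision UI.

```python
def levenshtein(a, b):
    """Minimum number of single-character insertions, deletions, and substitutions."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]

def is_similar_to(a, b, threshold=0.8):
    """Hypothetical similarity check: 1.0 means identical, 0.0 means nothing in common."""
    longest = max(len(a), len(b))
    if longest == 0:
        return True
    return 1 - levenshtein(a, b) / longest >= threshold

print(levenshtein("kitten", "sitting"))
print(is_similar_to("High CPU on host-01", "High CPU on host-02"))
```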

The contains (regex) operator lets you define a regex condition, which is powerful for matching arbitrary data values.
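One way to picture these operators is as small predicates applied to an attribute value. The mapping below is a hypothetical sketch, not the decision engine's implementation; it uses Python's `re.search` for contains (regex), and the `title` attribute is only an example.

```python
import re

# Each operator takes the attribute value and an operand and returns True or False.
OPERATORS = {
    "equals": lambda value, operand: value == operand,
    "contains": lambda value, operand: value is not None and operand in value,
    "starts with": lambda value, operand: value is not None and value.startswith(operand),
    "ends with": lambda value, operand: value is not None and value.endswith(operand),
    "exists": lambda value, operand: value is not None,
    "contains (regex)": lambda value, operand: (
        value is not None and re.search(operand, value) is not None
    ),
}

def evaluate(event, attribute, operator, operand=None, negate=False):
    """Evaluate one logic filter; negate=True gives forms such as 'does not equal'."""
    result = OPERATORS[operator](event.get(attribute), operand)
    return not result if negate else result

event = {"title": "CPU usage above 90% on host-01"}
print(evaluate(event, "title", "contains (regex)", r"host-\d+"))
print(evaluate(event, "title", "equals", "Disk full", negate=True))
```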

Similarity algorithms

Here are technical details on the similarity algorithms we use:

Regex operators

When building a decision, available operators include:

- contains (regex): used in Step 1: Filter your data.

- regular expression match: used in Step 2: Contextual correlation.

The decision builder follows the standards outlined in these documents for regular expressions.

Correlation assistant

You can use the correlation assistant to more quickly analyze alert events, create decision logic, and test the logic with a simulation. To use the correlation assistant:

- Go to one.newrelic.com > All capabilities > Alerts > Issues & activity > Alert events tab.

- Check the boxes of alert events you'd like to correlate. Then, at the bottom of the alert event list, click Correlate alert events.

- For best results for correlating alert events, select common attributes with a low frequency percentage. Learn more about using frequency.

- Click Simulate to see the likely effect of your new decision on the last week of your data.

- Click on examples of correlation pairs to determine which correlations to use.

- If you like what's been simulated, click Next, and then name and describe your decision.

- If the simulation result shows too many potential alert events, you may want to choose a different set of attributes and alert events for your decision, and run another simulation. Learn more about simulation.

Simulation vs real-time correlation

It's important to understand the difference between simulation and real-time correlation in decisions:

Simulation: Simulation correlation involves analyzing two separate alert events to understand their relationship under simulated conditions. These alert events can originate from either the same underlying issue or from different issues. The focus is on determining potential causative factors or shared characteristics between individual alert events. Simulation helps you test and validate your correlation logic against historical data before applying it in real-time.

Real-time correlation (decisions): In contrast, real-time correlation targets distinct issues, with each issue potentially encompassing multiple alert events. The aim is to detect and connect patterns across these multiple alert events to identify underlying issues for more efficient correlation. Real-time correlation leverages live data streams, allowing for prompt identification and response to emerging problems.

Using simulation

Simulation tests your correlation logic by analyzing two separate alert events from the last week of your data, showing you how many correlations would have happened. This allows you to validate your decision logic before it's applied to real-time correlation of issues. Here's a breakdown of the decision preview information displayed when you simulate:

- Potential correlation rate: The percentage of tested alert events this decision would have affected.

- Total created alert events: The number of alert events tested by this decision.

- Total estimated correlated alert events: The estimated number of alert events this decision would have correlated.

- Alert event examples: A list of alert event pairs that the decision would have correlated, including the rule's attributes and values, as well as other popular attributes in each pair. Click on alert events to view details.
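The preview numbers above can be approximated with a pairwise pass over historical alert events. This is a simplified sketch: the `simulate` function, the `id` and `conditionId` fields, and the way the rate is derived (events that appear in at least one matching pair, divided by all tested events) are assumptions, and the product's own estimate may be computed differently.

```python
from itertools import combinations

def simulate(events, decision_matches):
    """Test every pair of historical events and summarize the outcome."""
    pairs = [(a, b) for a, b in combinations(events, 2) if decision_matches(a, b)]
    correlated_ids = {event["id"] for pair in pairs for event in pair}
    total = len(events)
    rate = 100 * len(correlated_ids) / total if total else 0.0
    return {
        "total_created": total,
        "estimated_correlated": len(correlated_ids),
        "potential_correlation_rate": rate,  # percentage of tested events
        "examples": pairs,
    }

history = [
    {"id": 1, "conditionId": 42},
    {"id": 2, "conditionId": 42},
    {"id": 3, "conditionId": 7},
    {"id": 4, "conditionId": 9},
]
summary = simulate(history, lambda a, b: a["conditionId"] == b["conditionId"])
print(summary["potential_correlation_rate"])
```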

Run the simulation with different attributes as many times as you need until you see results you like. When you're ready, follow the UI prompts to save your decision.

Topology correlation

For New Relic alerts, topology is a representation of your service map: how the services and resources in your infrastructure relate to one another.

For decisions users, a default topology decision is added and enabled in your account. You also have the option to create custom decisions.

Our topology correlation finds relationships between alert event sources to determine if alert events and thus their respective issues should correlate. Topology correlation is designed to improve the quality of your correlations and the speed at which they're found.

Requirements

For automatic topology correlation (without the need to explicitly set up a topology graph), make sure your telemetry data is collected by New Relic agents. The more types of New Relic agents you install in your services and environment, the more opportunities topology decisions have to correlate your alert events.

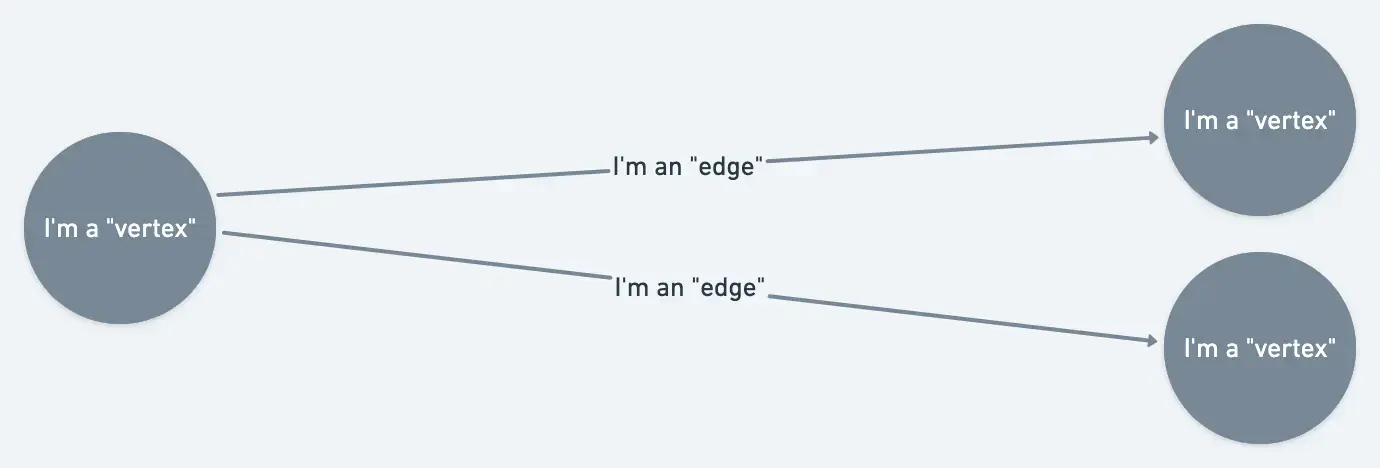

How does topology correlation work?

In this service map, the hosts and apps are the vertices, and the lines showing their relationships are the edges.

To set up your topology in addition to the entities and relationships collected by New Relic agents, use our NerdGraph API.

Customized topology correlation relies on two main concepts:

- Vertex: A vertex represents a monitored entity. It's the source that your alert events come from, or that they describe a problematic symptom about. A vertex has attributes (key/value pairs) configured for it, like entity GUIDs or other IDs, which allow it to be associated with incoming alert events.

- Edge: An edge is a connection between two vertices. Edges describe the relationship between vertices.
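The two concepts can be modeled with a small in-memory structure, shown here purely as an illustration. The class names, the `CID` defining attribute values, and the choice to treat edges as undirected are assumptions; the actual topology is stored and managed through New Relic.

```python
from dataclasses import dataclass, field

@dataclass
class Vertex:
    name: str
    defining_attributes: dict  # key/value pairs used to match alert events

@dataclass
class Topology:
    vertices: dict = field(default_factory=dict)  # name -> Vertex
    edges: dict = field(default_factory=dict)     # name -> set of neighbor names

    def add_vertex(self, vertex):
        self.vertices[vertex.name] = vertex
        self.edges.setdefault(vertex.name, set())

    def add_edge(self, first, second):
        # Edges are treated as undirected here (an assumption).
        self.edges.setdefault(first, set()).add(second)
        self.edges.setdefault(second, set()).add(first)

    def find_vertex(self, event):
        """Return the first vertex whose defining attributes all appear on the event."""
        for vertex in self.vertices.values():
            if all(event.get(key) == value
                   for key, value in vertex.defining_attributes.items()):
                return vertex
        return None

topology = Topology()
topology.add_vertex(Vertex("app", {"CID": "1"}))
topology.add_vertex(Vertex("host", {"CID": "2"}))
topology.add_edge("app", "host")
print(topology.find_vertex({"CID": "2", "title": "Host not reporting"}).name)
```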

It may help to understand how topology is used to correlate alert events:

First, New Relic gathers all relevant alert events. This includes alert events where decision logic steps 1 and 2 are true and that are also within the defined time window in advanced settings.

Next, we attempt to associate each alert event to a vertex in your topology graph, using a vertex's defining attributes and the available attributes on the alert event.

An example of the steps for associating alert events with the information in the topology graph.

Then, the pairs of vertices which were associated with alert events are tested using the "topologically dependent" operator to determine if these vertices are connected to each other.

This operator checks to see if there is any path in the graph that connects the two vertices within five hops.

The alert events are then correlated and the issues are merged together.
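The five-hop check in the steps above can be sketched as a breadth-first search with a hop limit. The function name and the adjacency-dictionary input are hypothetical, and treating two alert events on the same vertex as dependent is an assumption made for this example.

```python
from collections import deque

MAX_HOPS = 5  # hop limit stated in the docs

def topologically_dependent(edges, start, goal, max_hops=MAX_HOPS):
    """True when some path of at most max_hops edges connects start and goal."""
    if start == goal:
        return True  # assumption: the same vertex counts as dependent
    visited = {start}
    queue = deque([(start, 0)])
    while queue:
        vertex, hops = queue.popleft()
        if hops == max_hops:
            continue  # neighbors of this vertex would exceed the hop limit
        for neighbor in edges.get(vertex, ()):
            if neighbor == goal:
                return True
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, hops + 1))
    return False

# A simple chain a - b - c - d - e - f - g stored as undirected adjacency sets.
chain = "abcdefg"
edges = {name: set() for name in chain}
for left, right in zip(chain, chain[1:]):
    edges[left].add(right)
    edges[right].add(left)
print(topologically_dependent(edges, "a", "f"))  # five hops apart
print(topologically_dependent(edges, "a", "g"))  # six hops apart
```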

Add attributes to alert events

Alert events are connected to vertices using a vertex's defining attributes. (In the example topology under Topology explained, each vertex has a defining attribute "CID" with a unique value.) Next, New Relic's alerts system finds a vertex that matches the attribute.

If the defining attribute you'd like to use on your vertices isn't already on your alert events, use either of these options to add it:

Create or view topology

To set up your topology or view existing topology, see the NerdGraph topology tutorial.