Install the Python agent

Do you want to monitor the performance of your Python application? Install our Python agent. It collects telemetry about your application and forwards that data to New Relic. Once the data is in New Relic, you can use a wide range of UI pages to see what's happening in your software.
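The interactive steps on this page are the authoritative instructions for your environment. As a rough sketch of the general flow, you install the `newrelic` package with `pip install newrelic`, generate a config file with `newrelic-admin generate-config YOUR_LICENSE_KEY newrelic.ini`, and then start your app through `newrelic-admin run-program` with the `NEW_RELIC_CONFIG_FILE` environment variable pointing at that file. The helper below only builds that launch command. The function name `newrelic_launch`, the `newrelic.ini` path, and the `python app.py` app command are hypothetical placeholders.

```python
import os
import shlex


def newrelic_launch(app_argv: list[str], config_file: str = "newrelic.ini") -> tuple[dict[str, str], list[str]]:
    """Hypothetical helper: return (env, argv) for running app_argv under the agent.

    Mirrors the shell form:
        NEW_RELIC_CONFIG_FILE=newrelic.ini newrelic-admin run-program <app command>
    """
    env = dict(os.environ)  # copy, so the current process environment is untouched
    env["NEW_RELIC_CONFIG_FILE"] = config_file
    argv = ["newrelic-admin", "run-program", *app_argv]
    return env, argv


if __name__ == "__main__":
    env, argv = newrelic_launch(["python", "app.py"])  # placeholder app command
    print(shlex.join(argv))
    # To actually launch it: subprocess.run(argv, env=env, check=True)
```

Wrapping the start command with `newrelic-admin run-program` lets the agent load before your application code without editing the app itself.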

To learn how to use the Python agent to solve your app's performance issues, see our "My app is slow" tutorial.

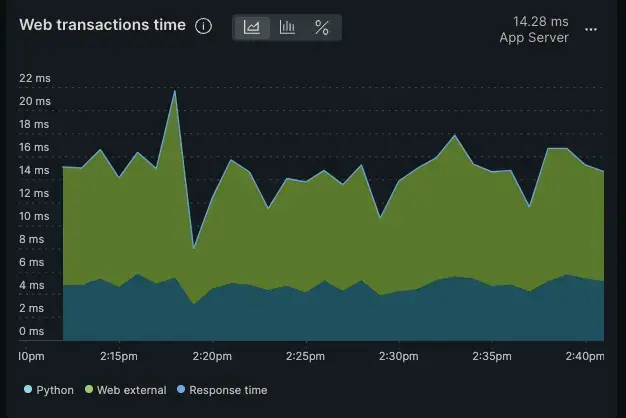

This is an example of the type of chart you would see in the New Relic UI after installing the Python agent.


Tip

Is your application running in a Kubernetes cluster? Try installing the agent with Kubernetes APM auto-attach instead.

This page is interactive, which means we'll ask you some questions about your environment so we can give you specific steps to install the Python agent. Here's what we want to know:

- Do you have a web app or a non-web app?

- If it's a web app, does it run in Docker?

- Which Python framework are you using?

- Which Python server are you using?

After you make your selections in the form below, you'll get steps customized for your environment. If you change your mind after making some selections, click Reset the form to start again.
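For a non-web app, such as a script, worker, or scheduled job, the agent has to be told which functions to record as transactions. The sketch below shows one way to do this, assuming the `newrelic` package is installed and a `newrelic.ini` file exists. It uses the agent's `newrelic.agent.background_task` decorator together with `initialize`, `register_application`, and `shutdown_agent`. The `nightly_report` function, its transaction name, the config path, and the no-op fallback used when the package is missing are hypothetical illustrations. They are not necessarily the steps the form gives you.

```python
import os

try:
    import newrelic.agent as nr_agent  # the agent package (pip install newrelic)
except ImportError:
    nr_agent = None  # agent not installed: fall back to plain function calls

CONFIG_FILE = "newrelic.ini"  # assumed path to a config made by newrelic-admin generate-config


def background_task(name=None):
    """Record the function as a non-web transaction when the agent is available.

    The no-op fallback is our own addition, not part of the agent's API.
    """
    if nr_agent is not None:
        return nr_agent.background_task(name=name)

    def decorator(func):
        return func

    return decorator


@background_task(name="nightly-report")  # hypothetical transaction name
def nightly_report(rows):
    # Placeholder work standing in for your real job.
    return sum(rows)


def main():
    agent_started = nr_agent is not None and os.path.exists(CONFIG_FILE)
    if agent_started:
        nr_agent.initialize(CONFIG_FILE)
        # Short-lived scripts can finish before the agent connects, so register up front.
        nr_agent.register_application(timeout=10.0)
    result = nightly_report([1, 2, 3])
    if agent_started:
        # Shut down cleanly so collected data is sent before the process exits.
        nr_agent.shutdown_agent(timeout=10.0)
    return result


if __name__ == "__main__":
    print(main())
```

Starting the agent only when the config file exists keeps the script usable on machines where the agent is not set up.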


What's next?

Now that you've installed the Python agent, learn how to solve performance issues with our tutorial series.