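Alert conditions give you a sophisticated set of tools for describing when you want to be notified about something that happened, or failed to happen, in what you monitor. For best results, choose the aggregation method that best matches the way your data arrives.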

The three aggregation methods are event flow, event timer, and cadence. If you're interested in a conceptual overview, see our documentation on streaming alerts: key terms and concepts.

What is aggregation?

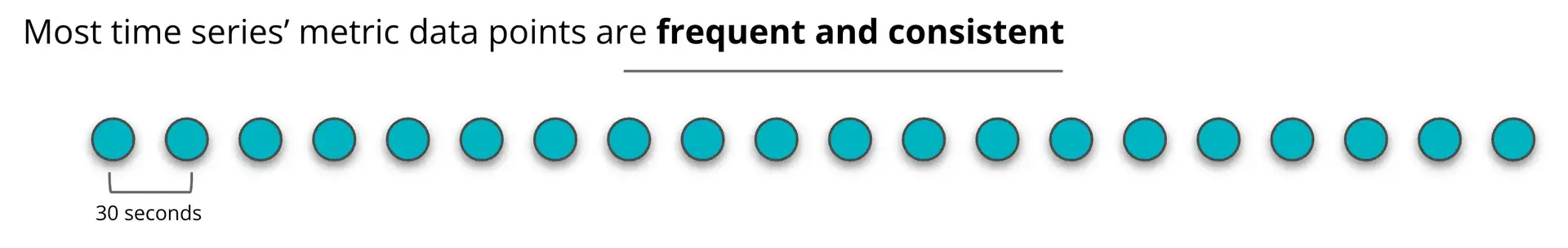

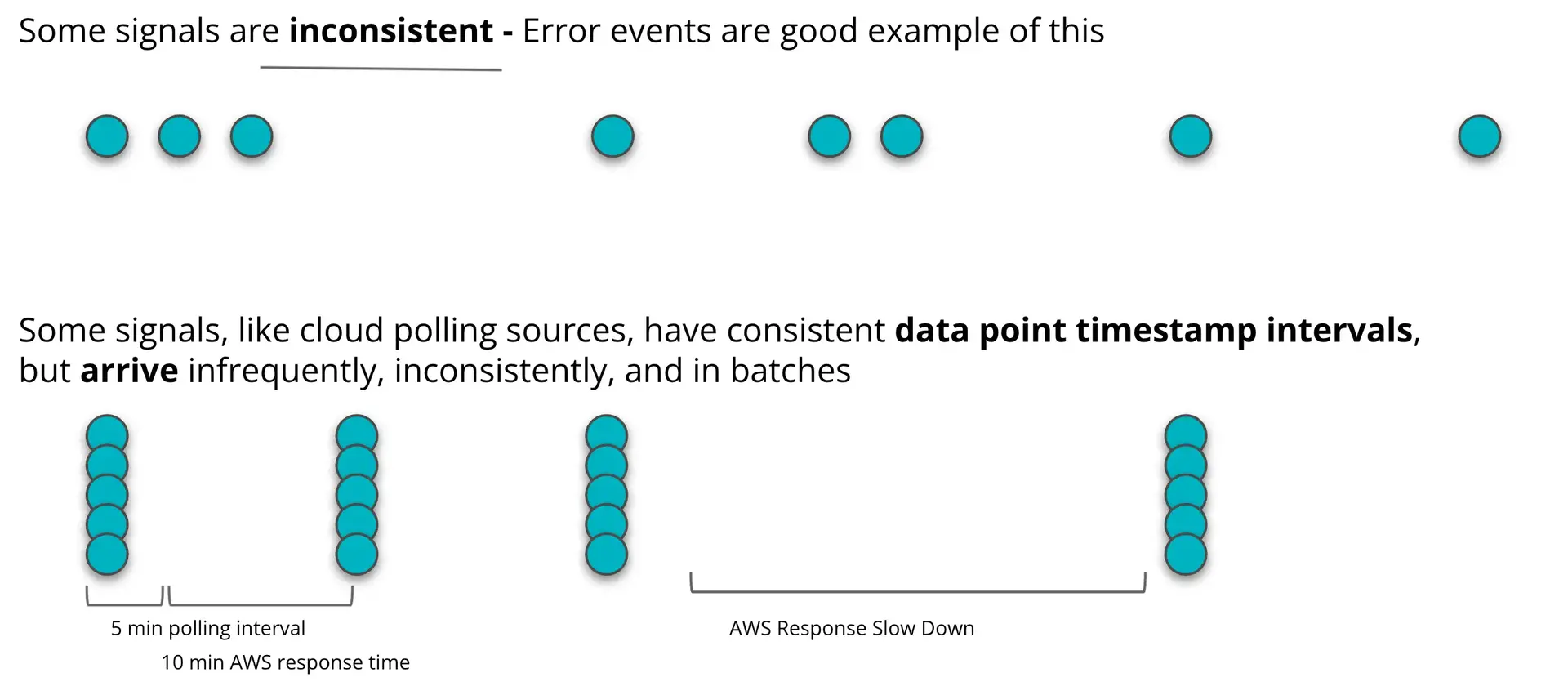

When New Relic monitors an application or service, data can arrive in many different ways. Some data arrives consistently and predictably, while other data arrives inconsistently and sporadically.

Aggregation is how the alerts system gathers data before analyzing it against your warning or critical thresholds.

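Data is collected as data points in an aggregation window and then converted into a single numeric value. The data points are aggregated according to your NRQL query, using functions such as sum, average, min, and max. That single numeric value is what the condition's thresholds are evaluated against.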
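As a rough illustration of the paragraph above, the sketch below collapses one window's data points into a single number and compares it to a threshold. The function names, the sample values, and the "above" comparison are assumptions for illustration only; they are not New Relic's implementation or API.

```python
from statistics import mean

# Hypothetical mapping from the aggregation functions named above to Python callables.
AGGREGATORS = {"sum": sum, "average": mean, "min": min, "max": max}


def aggregate_window(values: list[float], function: str = "average") -> float:
    """Collapse one aggregation window's data points into a single number."""
    return AGGREGATORS[function](values)


def breaches_threshold(value: float, threshold: float) -> bool:
    """Assumes an 'above' operator; this sketch checks nothing else."""
    return value > threshold


# One window of hypothetical response times, in milliseconds.
window = [120, 340, 95, 610]
print(aggregate_window(window, "average"))                       # 291.25
print(breaches_threshold(aggregate_window(window, "max"), 500))  # True
```

The same window can breach or not breach depending on the function: its maximum exceeds 500 ms, while its average does not.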

Once data has been aggregated, no more data points can be added to it. Our aggregation methods help you strike a balance between aggregating data quickly and waiting for enough data points to arrive.

Why it matters

Using the right aggregation method makes you more likely to get the notifications you care about and to avoid the ones you don't.

The most important questions to ask when choosing an aggregation method are: How often does my data arrive? How consistently does my data arrive?

If your data arrives frequently and consistently, in a linear fashion, we recommend event flow.

If your data arrives sporadically, inconsistently, or out of order, we recommend event timer aggregation.

When to use event flow

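Event flow aggregates data based on data point timestamps, so it's important that data points arrive in a consistent, linear fashion. This method doesn't work well when timestamps arrive out of order, or when timestamps spanning a wide range of time arrive within a short period.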

Event flow is the default aggregation method because it fits the most common use cases.

How event flow works

Event flow uses data point timestamps to decide when to open and close an aggregation window.

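For example, if you use event flow with a delay setting of 2 minutes, the aggregation window closes when a data point arrives whose timestamp is 2 minutes later than the latest timestamp received.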

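A data point with a 12:00pm timestamp arrives, and an aggregation window opens. Some time later, a data point with a 12:03pm timestamp arrives. Event flow closes the window, excluding the 12:03pm data point, and evaluates the closed window against your thresholds.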

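An event flow aggregation window keeps collecting data points until a later timestamp arrives. What moves the system forward is the arrival of later timestamps, not the data points themselves. Before aggregating, event flow waits as long as it takes for a data point with a timestamp beyond the delay setting to arrive.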
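To make the 12:00pm/12:03pm example concrete, here is a minimal, hypothetical model of event flow. It assumes 1-minute windows and measures the 2-minute delay from the end of each window, which is one way to read the rule above; the class and its fields are illustrative only, not New Relic's implementation. Note that only data point timestamps move it forward; nothing here consults a clock.

```python
from dataclasses import dataclass, field


@dataclass
class EventFlowSketch:
    """Simplified, hypothetical model of event flow; not New Relic's implementation."""
    window_s: int = 60   # assumed 1-minute aggregation window
    delay_s: int = 120   # the 2-minute delay from the example
    open_windows: dict[int, list[float]] = field(default_factory=dict)
    closed: list[tuple[int, list[float]]] = field(default_factory=list)

    def add(self, timestamp_s: int, value: float) -> None:
        # Only data point timestamps drive progress: close every open window whose
        # end plus the delay has been reached by this point's timestamp.
        for start in sorted(self.open_windows):
            if start + self.window_s + self.delay_s <= timestamp_s:
                self.closed.append((start, self.open_windows.pop(start)))
        start = timestamp_s - timestamp_s % self.window_s
        # A window that has already been aggregated accepts no more data points.
        if any(s == start for s, _ in self.closed):
            return
        self.open_windows.setdefault(start, []).append(value)


noon = 12 * 3600  # timestamps are seconds since midnight
flow = EventFlowSketch()
flow.add(noon, 1.0)        # 12:00pm: opens the 12:00 window
flow.add(noon + 90, 1.0)   # 12:01:30pm: not late enough to close anything
flow.add(noon + 180, 1.0)  # 12:03pm: closes the 12:00 window, lands in its own
print(flow.closed)         # [(43200, [1.0])]
```

A straggler stamped 12:00:30pm that arrives after this point is dropped, because its window has already been aggregated.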

Event flow works best for data that arrives frequently and consistently.

Caution

If you expect data points to arrive 65 minutes or more apart, use the event timer method described below.

Event flow use cases

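Here are some common use cases for event flow:

- Agent data.

- Infrastructure agent data.

- Any data from third parties that comes in frequently and reliably.

- Most AWS CloudWatch metrics coming from AWS Metric Streams (not polling). The main exception is AWS CloudWatch data that is very infrequent whether it's streamed or polled (for example, S3 volume data); for that data, use event timer.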

When to use event timer

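Event timer aggregation is based on a timer that starts counting down when a data point arrives. The timer resets every time a new data point arrives. If the timer runs out before a new data point arrives, event timer aggregates all the data points received during that time.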

Event timer works best for alerting on events that happen sporadically, with large gaps in time between them.

How event timer works

Errors are a type of event that happens sporadically, unpredictably, and often with large gaps in time.

For example, you might have a condition whose query returns a count of errors. Several minutes go by without any errors, and then five errors occur within a single minute.

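In this example, event timer does nothing until the first of the five errors arrives. It then starts the timer and resets it each time a new error arrives. When the countdown reaches zero with no new error, event timer aggregates the data and evaluates it against your thresholds.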
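The countdown described above can be modeled as grouping arrivals whose gaps stay shorter than the timer. This is a hypothetical sketch: it assumes arrival times in seconds, a 60-second timer, and that each batch is summed (as an error count would be); none of these names come from New Relic.

```python
def event_timer_batches(arrivals: list[tuple[int, float]], timer_s: int) -> list[tuple[int, float]]:
    """Hypothetical event timer model: returns (aggregated_at_s, summed_value) pairs.

    arrivals holds (arrival_time_s, value) pairs; the timer restarts on every
    arrival, and a batch is aggregated once timer_s passes with no new arrival.
    """
    batches: list[tuple[int, float]] = []
    current: list[tuple[int, float]] = []
    for t, value in sorted(arrivals):
        # The timer ran out before this point arrived, so the previous batch was aggregated.
        if current and t - current[-1][0] >= timer_s:
            batches.append((current[-1][0] + timer_s, sum(v for _, v in current)))
            current = []
        current.append((t, value))
    if current:  # the final countdown eventually expires too
        batches.append((current[-1][0] + timer_s, sum(v for _, v in current)))
    return batches


# Five errors within one minute, then a lone error much later (hypothetical data).
errors = [(600, 1), (610, 1), (630, 1), (650, 1), (660, 1), (2000, 1)]
print(event_timer_batches(errors, timer_s=60))  # [(720, 5), (2060, 1)]
```

The five clustered errors are evaluated together one timer length after the last of them, and the quiet period before them produces no windows at all.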

Event timer use cases

Here are some common use cases for event timer:

- New Relic usage data.

- Cloud integration data that is polled (for example, using the GCP, Azure, or AWS polling methods).

- Queries that deliver sparse or infrequent data, such as error counts.

Cadence

Cadence is our original aggregation method. It aggregates data at specific time intervals as detected by New Relic's internal wall clock, regardless of the data's timestamps.

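Unless your events are prone to clock skew and their producer has no way to correct it, we recommend using one of the other aggregation methods instead. For example, PageAction events are instrumented in the user's browser and rely on the user's device clock to assign timestamps. A single event with a skewed timestamp can affect event flow or event timer alerts, because it can cause a window to be aggregated too early and produce false positives.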
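For contrast with the timestamp-driven methods, here is a minimal sketch of cadence-style grouping. It assumes each point records when it was received (`received_at_s`, a hypothetical field standing in for New Relic's internal wall clock) and uses an assumed 1-minute window; it illustrates the idea rather than New Relic's implementation.

```python
from collections import defaultdict


def cadence_windows(points, window_s=60):
    """Group points by the receiver's wall clock, ignoring event timestamps.

    points: iterable of (received_at_s, event_timestamp_s, value) tuples, where
    received_at_s is a hypothetical stand-in for New Relic's internal clock.
    """
    windows = defaultdict(list)
    for received_at_s, _event_timestamp_s, value in points:
        # The device-assigned timestamp is never consulted, so skew cannot move data.
        windows[received_at_s - received_at_s % window_s].append(value)
    return dict(windows)


# The second event comes from a device whose clock is far ahead.
points = [(600, 600, 1), (610, 4_000_000, 1), (650, 645, 1)]
print(cadence_windows(points))  # {600: [1, 1, 1]}
```

In a timestamp-driven method, the skewed second event could close the window early; here it simply lands in the window in which it was received.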

Outside of that scenario, choose one of the other two methods: event flow works best for consistent, predictable data points, and event timer works best for inconsistent, sporadic ones.
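The guidance in this section can be condensed into a rough rule of thumb. The function below is a hypothetical heuristic, not a New Relic feature; its inputs (the largest expected gap between data points, whether data arrives consistently and in order, and whether producer clocks can be skewed) are assumptions you would have to judge for your own data.

```python
def suggest_aggregation(max_gap_minutes: float, consistent_and_in_order: bool,
                        clock_skew_prone: bool = False) -> str:
    """Rough, hypothetical heuristic that encodes this page's guidance."""
    # Cadence only for events whose producer clocks can be skewed and can't be fixed.
    if clock_skew_prone:
        return "cadence"
    # Gaps of 65 minutes or more, or sporadic/out-of-order data, suit event timer.
    if max_gap_minutes >= 65 or not consistent_and_in_order:
        return "event timer"
    # Frequent, consistent, in-order data suits the default method.
    return "event flow"


print(suggest_aggregation(1, True))  # event flow
```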

Aggregation and loss of signal

The loss of signal system runs separately from these aggregation methods and their settings.

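If you set an alert condition to open a new incident when the signal has been lost for 10 minutes, the loss of signal service watches for arriving data points. If no new data arrives within 10 minutes, the loss of signal opens an incident.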
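A minimal sketch of that separation, under stated assumptions: the watcher below only records when data points arrive and never looks at aggregation windows. The class name, the seconds-based clock, and the choice to report nothing before the first data point are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LossOfSignalWatcher:
    """Hypothetical sketch: tracks arrivals independently of any aggregation window."""
    expiration_s: int = 600  # the condition's 10-minute signal-loss duration
    last_arrival_s: Optional[int] = None

    def on_data_point(self, now_s: int) -> None:
        # Any arriving data point counts as signal, regardless of its value or window.
        self.last_arrival_s = now_s

    def signal_lost(self, now_s: int) -> bool:
        """True once expiration_s has passed with no new data point."""
        if self.last_arrival_s is None:
            return False  # assumption: nothing to lose before the first data point
        return now_s - self.last_arrival_s >= self.expiration_s


watcher = LossOfSignalWatcher()
watcher.on_data_point(0)
print(watcher.signal_lost(599), watcher.signal_lost(600))  # False True
```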

For more on when to use gap filling and loss of signal, see our forum post on when to use gap filling and loss of signal.